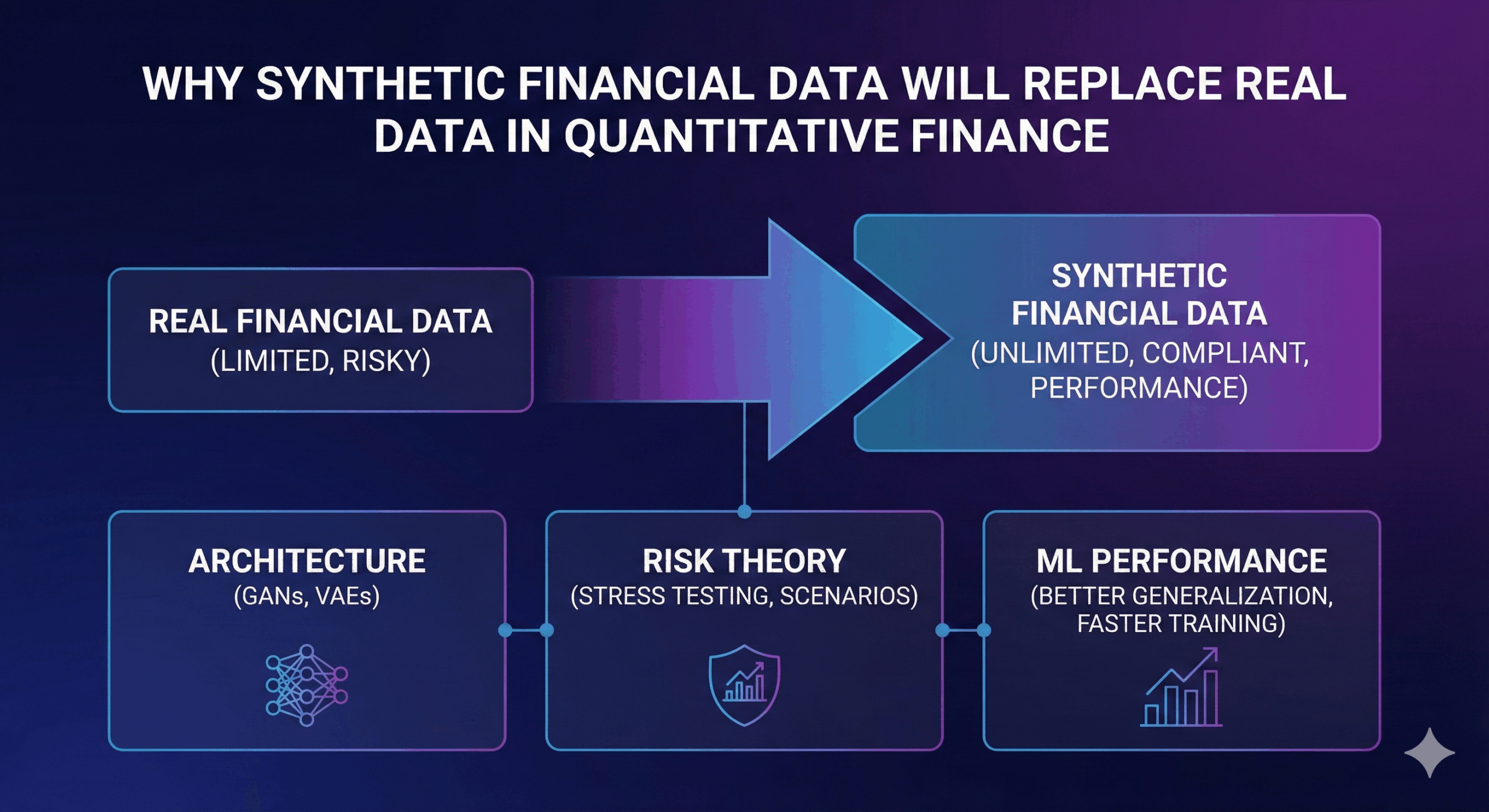

The year 2025 marks the moment when quantitative finance begins to separate itself from the historical dependency on real datasets. What is happening now is not evolution, but replacement. Financial institutions are discovering that synthetic financial data generation addresses the fundamental weaknesses of real-world datasets: limited access, privacy constraints, non-stationarity, uneven regimes and the heavy regulatory friction that slows down every ML workflow. The irony is that the more advanced financial models become, the less compatible they are with traditional approaches to data acquisition. Machine learning thrives on abundance, continuity and structural consistency — while financial datasets are fragmented, noisy and trapped in compliance frameworks. Synthetic data resolves this contradiction by producing datasets that behave like financial systems rather than like spreadsheets extracted from operational databases.

Architectural foundations: why finance-first generative models outperform generic GANs

The breakthrough appears when strategies shift. Specifically, synthetic financial data generation must stop relying on general-purpose AI. Instead, it must shift to finance-specific architectures. Northhaven Analytics demonstrates this difference clearly.

Instead of using a simple GAN, we innovate. Specifically, we do not just mimic table distributions. Rather, the model captures generative processes. These shape financial behaviour.

Its kernel is CTGAN-derived. It handles mixed-type variables. Moreover, it does so with mathematical sensitivity. In addition, convolutional layers record patterns. For example, long-horizon transaction sequences. Also, credit dynamics and behavioural cycles.

The discriminator functions differently. It acts more like a financial auditor. It does not act as a binary classifier. Instead, it identifies violations of logic. Consequently, it rejects samples. Specifically, those contradicting credit theory. Or, risk progression and temporal consistency.

Because of this, the model is unique. It does not generate “fake data”. Rather, it generates plausible financial behaviour. Ultimately, this is rooted in structural relationships. (See our Synthetic Banking Datasets Engine).

The non-stationarity problem and how synthetic datasets bypass historical bias

A central flaw exists in real financial data. Specifically, it represents the past world. It does not show what could be. For instance, economic regimes shift. Also, interest-rate cycles reshape behaviour. Moreover, liquidity structures collapse. Consequently, credit risk evolves. This invalidates historical assumptions.

When ML models use these datasets, they fail. Inevitably, they learn old patterns. These belong to a specific temporal fragment.

However, synthetic financial data generation changes this. It allows institutions to reconstruct mechanisms. Specifically, the mechanisms of financial behaviour. Therefore, they stop memorizing history.

Synthetic datasets can represent alternative regimes. Also, they show adjusted risk dynamics. In addition, they model counterfactual behaviour. Consequently, they are more robust. In this context, synthetic data is unique. It is not a substitute for history. Rather, it is a higher-resolution abstraction. (Read about AI Risk Modelling Datasets).

Model performance: why synthetic data often matches or exceeds real training datasets

There is a widely held assumption. Specifically, that synthetic datasets perform worse. However, in finance, the opposite is true. When generation relies on logic, results improve. Models trained on synthetic data excel. Frequently, they achieve equal accuracy. Sometimes, even greater accuracy than real datasets.

This happens because synthetic data cleans noise. Specifically, it removes operational noise. Also, it eliminates survivorship bias. Furthermore, it fixes incomplete records. Finally, it removes legacy distortions.

What remains is a clean representation. It shows true financial behaviour. Moreover, essential correlations are preserved.

Consequently, quants gain freedom. They can run massive searches. Also, they test alternative architectures. Ultimately, they build robust predictors. Crucially, they do this without waiting. There are no approvals or anonymization delays. Thus, the tempo of research shifts. It moves from compliance-driven to model-driven. (Start with a Free Demo Dataset).

Risk modelling transformed by counterfactual synthetic economies

One of the least discussed advantages of synthetic datasets is their ability to express financial behaviour that has never occurred but is entirely plausible within economic theory. Real datasets rarely contain extreme events. They do not offer enough credit cascades, liquidity freezes, systemic contagion or multi-factor behavioural shocks for meaningful stress testing. Synthetic financial data generation solves this by enabling the construction of entire synthetic economies calibrated to realistic structural relationships but free from the narrow limitations of history. Risk teams can evaluate models against thousands of possible futures rather than a single past. This capability fundamentally changes portfolio stress testing, regulatory modelling and capital adequacy analysis. Instead of “what happened,” synthetic systems allow institutions to model “what could happen,” which is infinitely more valuable.

Regulatory implications: why synthetic data is the only compliant pathway to scalable financial AI

The regulatory argument is straightforward but decisive. Financial institutions operate under GDPR, banking secrecy and internal data-governance frameworks that severely limit access to granular customer information. Even anonymized datasets often remain high-risk because anonymization is reversible under certain conditions. Synthetic datasets produced through finance-first generative modelling remove this risk entirely. They contain no personal data, no recoverable identifiers and no traces of real individuals. For the first time, compliance and innovation stop being mutually exclusive. Quants, ML engineers and risk analysts gain unrestricted access to high-resolution data without entering regulatory conflict. This alone positions synthetic data not as an alternative but as the inevitable future of financial modelling.

Northhaven Analytics at the center of the quantitative data revolution

Northhaven Analytics represents the moment where synthetic data becomes not only technically feasible but practically superior to real datasets. By designing a generative engine that captures financial realism at the architectural level rather than through superficial pattern imitation, Northhaven offers institutions a dataset source that is more stable, more flexible and more mathematically coherent than the operational data stored in banking systems. Synthetic financial data generation is no longer an experiment. It is becoming the infrastructure layer on which the next decade of financial AI will be built.