Telecom Data in 2026: How AI and Synthetic Data Are Transforming Telecommunications

Mobile network operators are sitting on the most valuable and most underutilised asset in enterprise history. Telecom data — subscriber behaviour, network performance logs, billing records, churn signals — holds the key to the next decade of telco profitability. The problem: it’s fragmented, privacy-constrained, and almost impossible to use safely at scale. Until now.

The telecommunications industry generates more data per second than almost any other sector on the planet. Every call, every data packet, every dropped connection, every subscriber interaction — it all flows into systems that are, paradoxically, too fragmented to use effectively. The promise of AI-powered, autonomous network management, personalised customer experiences, and predictive maintenance has been held hostage by one fundamental problem: telecom data sits in silos, locked behind privacy regulations, legacy architectures, and organisational barriers that make it nearly impossible to unify, share, or train models on safely. Northhaven Analytics was built to solve exactly this problem — by generating synthetic telecom data that is mathematically faithful, infinitely scalable, and contains zero real customer information.

The State of Telecom Data in 2026

The telecom industry is undergoing its most significant transformation since the introduction of the smartphone. The convergence of 5G network rollout, edge computing, and agentic AI applications has created an environment where data is both the most critical resource and the most constrained one. Mobile network operators — from AT&T and Comcast to regional telcos across Europe and Asia — are racing to leverage their vast reserves of network data, subscriber behaviour logs, and operational telemetry. But the infrastructure to actually use that data safely and at scale has lagged far behind.

According to industry research, the average large telecommunications provider manages data across more than 40 disparate systems — billing platforms, network management tools, CRM databases, BSS/OSS stacks, and third-party vendor feeds. These data silos don’t just create operational inefficiency; they actively block the AI and machine learning capabilities that modern telcos need to compete. When you cannot unify data across these systems safely, you cannot build the predictive models that drive revenue, reduce churn, or optimise network performance at the speed the market demands.

Why Telecom Data Is Different

Unlike financial data or retail transaction data, telco data carries a unique combination of sensitivity and complexity. A single subscriber record might contain location history, communication patterns, device identifiers, payment behaviour, and network quality experience — all of which are subject to stringent privacy regulation under GDPR, CCPA, and sector-specific frameworks. At the same time, this data contains the most granular, high-frequency signals of human behaviour available to any industry. The tension between its value and its sensitivity is precisely what has made it so difficult to leverage at scale.

A single mobile network operator with 50 million subscribers generates approximately 2.5 petabytes of raw network telemetry per day. Of this, industry estimates suggest less than 3% is currently used for AI or analytics workloads. The rest either sits in storage, legally inaccessible for training purposes without complex anonymisation pipelines, or is simply discarded.

The real bottleneck is not storage or compute — it’s the inability to safely unify data across systems and expose it to the analytics and machine learning pipelines that would create value. This is where synthetic data changes everything.

Data Silos, Data Security, and the Governance Gap

The single biggest barrier to data and AI adoption in the telecom industry is not technology — it is governance. Telcos hold extraordinarily sensitive customer data: call detail records, SMS metadata, location traces, payment history, device fingerprints. A single data breach in this context is not merely a reputational risk — it is a regulatory catastrophe that can cost hundreds of millions in fines and permanently damage subscriber trust.

The result is a paralysis that affects the entire data ecosystem. Teams that want to build churn prediction models cannot access production subscriber records. Network engineers who need to train anomaly detection systems on real traffic patterns cannot expose that data to external tools. Data science teams building personalised customer experiences are handed sanitised, months-old extracts that bear little resemblance to the live data they need. The innovation velocity of the entire organisation is throttled by the correct but costly impulse to protect data security.

The Compliance Architecture Problem

Modern telco data environments are built around access controls designed to prevent misuse — but these same controls create bottlenecks that slow legitimate AI development to a crawl. Data governance frameworks, while essential for protecting subscriber privacy and regulatory compliance, were largely designed in an era before machine learning and big data analytics. They were not built to accommodate the rapid iteration cycles that AI development requires.

The result is a deeply ironic situation: telcos that are investing hundreds of millions in AI infrastructure simultaneously find that the data those AI systems need is locked behind governance processes that may take longer than the product development cycle itself. Platforms like Snowflake have helped organisations begin to centralise and streamline data management, but even cloud-native data platforms do not solve the fundamental problem of what can legally be put into them — and what can be shared across organisational boundaries.

In a 2025 survey of 200 senior data executives at telecom firms, 74% reported that their data governance policies actively prevented at least two high-priority AI projects from launching on schedule. The same executives rated data governance as their top strategic priority, creating a situation in which the controls meant to reduce data risk introduce a risk of their own: missed innovation opportunities.

How Northhaven Analytics Generates Synthetic Telecom Data

We generate synthetic telecom data at enterprise scale

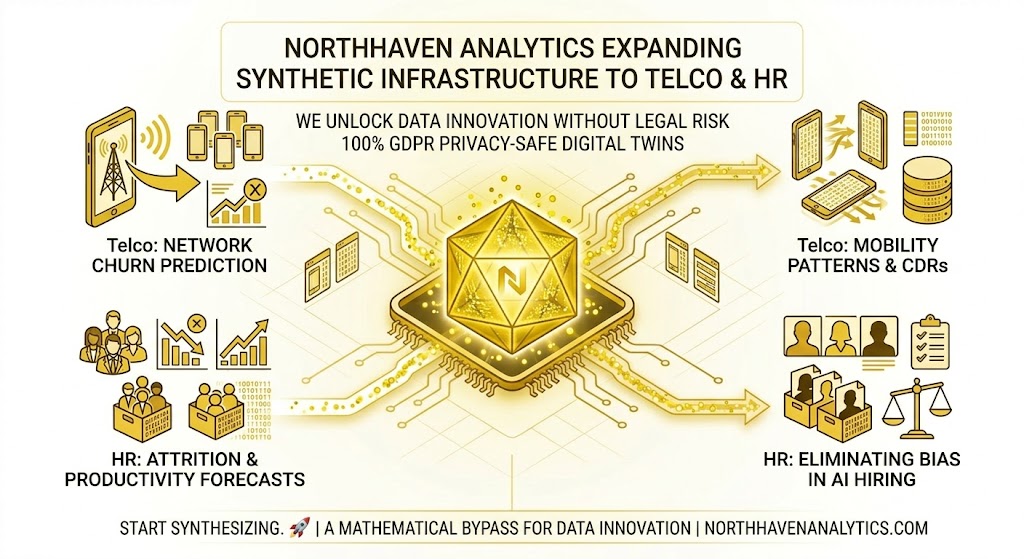

Northhaven Analytics has expanded its synthetic data capabilities to the telecommunications sector. Building on our proven infrastructure in financial services and MedTech, we now generate mathematically precise synthetic datasets that replicate the statistical properties, correlations, and behavioural patterns of real telecom data — without containing a single real subscriber record. From call detail records and network telemetry to subscriber churn signals and 5G traffic profiles, our synthetic datasets are production-ready and deployable in weeks.

Synthetic data is not a workaround or a compromise — it is an AI-driven methodology that produces datasets with provably equivalent statistical properties to real data, generated from scratch using generative models trained on the structural characteristics of real telecom systems. At Northhaven, we use a combination of machine learning architectures including Generative Adversarial Networks (GANs), Variational Autoencoders (VAEs), and copula-based dependency models to ensure that the synthetic telecom data we produce is faithful to the real data it represents — including its tail events, its correlations, and its temporal dynamics.
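To make the copula idea concrete, here is a minimal sketch of a two-column Gaussian copula using only the Python standard library. It is an illustration under stated assumptions, not Northhaven's pipeline: the function names are hypothetical, only two numeric columns are handled, and the marginals are reproduced by reusing observed values through empirical quantiles, which a privacy-preserving generator would not do.

```python
import math
import random
from statistics import NormalDist

_STD_NORMAL = NormalDist()  # standard normal, used for score and CDF transforms


def normal_scores(values):
    """Map each value to a standard-normal score based on its rank."""
    n = len(values)
    order = sorted(range(n), key=lambda i: values[i])
    scores = [0.0] * n
    for rank, i in enumerate(order):
        scores[i] = _STD_NORMAL.inv_cdf((rank + 0.5) / n)
    return scores


def pearson(xs, ys):
    """Plain Pearson correlation coefficient."""
    n = len(xs)
    mx, my = sum(xs) / n, sum(ys) / n
    cov = sum((x - mx) * (y - my) for x, y in zip(xs, ys))
    vx = sum((x - mx) ** 2 for x in xs)
    vy = sum((y - my) ** 2 for y in ys)
    return cov / math.sqrt(vx * vy)


def empirical_quantile(sorted_values, u):
    """Value at quantile u (0 <= u <= 1) of a pre-sorted sample."""
    idx = min(int(u * len(sorted_values)), len(sorted_values) - 1)
    return sorted_values[idx]


def fit_and_sample(col_a, col_b, n_samples, seed=0):
    """Fit a Gaussian copula to two columns and draw synthetic pairs."""
    rng = random.Random(seed)
    # Dependence is estimated on normal scores, separately from the marginals.
    rho = pearson(normal_scores(col_a), normal_scores(col_b))
    rho = max(-1.0, min(1.0, rho))  # guard against floating-point overshoot
    sorted_a, sorted_b = sorted(col_a), sorted(col_b)
    pairs = []
    for _ in range(n_samples):
        z1 = rng.gauss(0.0, 1.0)
        z2 = rho * z1 + math.sqrt(1.0 - rho * rho) * rng.gauss(0.0, 1.0)
        u1, u2 = _STD_NORMAL.cdf(z1), _STD_NORMAL.cdf(z2)
        pairs.append((empirical_quantile(sorted_a, u1),
                      empirical_quantile(sorted_b, u2)))
    return pairs
```

Calling `fit_and_sample(monthly_gb, support_calls, 10_000)` on two equal-length lists (hypothetical column names) returns pairs whose rank dependence roughly tracks the input. Capturing tail events and temporal dynamics, as the paragraph above describes, needs considerably more machinery than this.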

What Synthetic Telecom Data Looks Like in Practice

The synthetic telecom datasets Northhaven generates are indistinguishable from real data in terms of their statistical properties — but completely safe to share, store, and train models on. A synthetic subscriber dataset, for example, will contain the same distribution of usage patterns, the same correlation between data usage and churn propensity, the same seasonal variations in call volumes, and the same rare event frequencies as the real subscriber base — without any of the actual subscriber records from which those patterns were derived.
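One common way to test a claim of matching distributions is the two-sample Kolmogorov-Smirnov statistic, which measures the largest gap between two empirical distribution functions. The sketch below is an assumption about how such a check could look, not a description of Northhaven's fidelity methodology. It covers a single numeric column only.

```python
from bisect import bisect_right


def ks_statistic(real, synthetic):
    """Largest absolute gap between the two empirical CDFs (0 = identical)."""
    if not real or not synthetic:
        raise ValueError("both samples must be non-empty")
    real_sorted, syn_sorted = sorted(real), sorted(synthetic)
    n, m = len(real_sorted), len(syn_sorted)
    gap = 0.0
    # The maximum gap between two step functions occurs at an observed value.
    for x in set(real_sorted) | set(syn_sorted):
        cdf_real = bisect_right(real_sorted, x) / n
        cdf_syn = bisect_right(syn_sorted, x) / m
        gap = max(gap, abs(cdf_real - cdf_syn))
    return gap
```

A value near 0 means the column's distribution is closely reproduced; a value near 1 means the two samples barely overlap. Correlations, seasonality, and rare event rates would each need their own comparison.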

This is critical for use cases like fraud detection, where the model needs to learn from rare but high-impact events that are systematically underrepresented in anonymised datasets. Our generation pipeline can deliberately over-represent rare events, such as SIM swap fraud patterns, network anomalies indicative of cyber attacks, and tower failure precursor signals, so that models trained on Northhaven synthetic data are more robust than those trained on imperfectly anonymised real data.

Northhaven can generate synthetic telecom datasets of any scale — from targeted 10,000-row proof-of-concept samples to multi-billion-record enterprise data lakes — in days, not months. Every record statistically equivalent to real subscriber data. Zero PII. Full GDPR compliance from day one.

Use Cases: Where Synthetic Telecom Data Creates Value

The range of use cases for synthetic telecom data spans every function in the modern telco, from network operations and cybersecurity to customer care, product development, and strategic planning. Below we examine the six highest-impact applications that Northhaven Analytics is actively supporting across the telecom industry.

Churn is the single most costly problem in the telecom industry, costing operators an estimated $1,000–$4,000 per lost subscriber when acquisition costs are factored in. Building effective churn prediction models requires access to rich, longitudinal subscriber behaviour data — exactly the kind of data that is most sensitive and most difficult to use.

Northhaven generates synthetic subscriber datasets that faithfully replicate churn behavioural signals: declining usage patterns, increasing support contacts, competitor trial activity, payment delays. Models trained on our synthetic data achieve comparable predictive accuracy to those trained on real data — with zero regulatory exposure and full auditability.

Network performance is the core product of every telco. Predictive maintenance, anomaly detection, and autonomous network management all require access to high-resolution telemetry data from network equipment — data that is both highly sensitive and extraordinarily voluminous. Training AI models to predict failures before they occur requires examples of failure modes that may happen only once every five years in a real network.

Northhaven synthetic network telemetry datasets deliberately over-represent rare failure modes — tower degradation signals, backhaul congestion patterns, spectrum interference signatures — ensuring that predictive models are trained on the full range of scenarios they will encounter in production, including edge cases that real historical data may not contain.

Telecom fraud costs the industry over $40 billion annually. SIM swap attacks, subscription fraud, international revenue share fraud (IRSF), and bypass fraud are among the most damaging — and hardest to detect — because they mimic legitimate behaviour at the signal level. Training effective AI-powered fraud detection systems requires access to both normal and fraudulent call patterns, billing behaviours, and network access sequences.

Northhaven generates synthetic fraud datasets with configurable fraud prevalence rates, realistic fraud pattern signatures, and full temporal dynamics — giving cybersecurity and fraud teams the training data they need without exposing real subscriber records or real fraud investigation data.
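The idea of a configurable fraud prevalence rate can be illustrated with a simple resampler. This is a deliberately simplified stand-in: it draws existing rows with replacement rather than generating new ones, and the function name and the `is_fraud` predicate are hypothetical.

```python
import random


def resample_to_prevalence(records, is_fraud, target_rate, n_out, seed=0):
    """Draw n_out records with replacement; round(n_out * target_rate) are fraud."""
    if not 0.0 <= target_rate <= 1.0:
        raise ValueError("target_rate must be between 0 and 1")
    rng = random.Random(seed)
    fraud = [r for r in records if is_fraud(r)]
    normal = [r for r in records if not is_fraud(r)]
    n_fraud = round(n_out * target_rate)
    n_normal = n_out - n_fraud
    if (n_fraud and not fraud) or (n_normal and not normal):
        raise ValueError("cannot reach the target rate from the given records")
    picked = rng.choices(fraud, k=n_fraud) if n_fraud else []
    picked += rng.choices(normal, k=n_normal) if n_normal else []
    rng.shuffle(picked)  # avoid all fraud rows sitting at the start
    return picked
```

For example, `resample_to_prevalence(rows, lambda r: r["label"] == "fraud", 0.2, 500)` returns 500 rows of which 100 are fraud, regardless of how rare fraud was in `rows`. A generative approach would produce new fraud examples instead of repeating the few that exist.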

5G network deployment requires operators to model demand patterns, spectrum utilisation, and interference scenarios across thousands of potential cell site configurations before committing to infrastructure investment. Real traffic data from 4G networks provides only a partial picture — 5G introduces new use cases (massive IoT, network slicing, ultra-low latency applications) for which historical data simply does not exist.

Northhaven generates synthetic 5G traffic profiles that model the demand patterns of smart city deployments, industrial IoT, and autonomous vehicle connectivity, giving network planning teams the data they need to optimise operations and make informed decisions about deployment sequencing and network architecture.

Enhance customer experiences — but not by accessing real customer data. The paradox of personalisation in telecoms is that the data needed to deliver truly personalised offers, proactive service alerts, and customer care interventions is the most privacy-sensitive data the operator holds. Building AI systems that can deliver personalised customer experiences requires training on rich behavioural data that simply cannot be exposed in its raw form.

Northhaven’s synthetic subscriber profiles replicate the full spectrum of customer behaviour segments — high-value data users, voice-primary segments, at-risk prepaid subscribers, business accounts — enabling personalisation models to be built, tested, and validated without a single real customer record entering the training pipeline.

Cybersecurity teams at telcos face a unique challenge: they need to train AI-based threat detection systems on realistic attack patterns — but exposing real network traffic to security research environments creates its own risks. Data breach scenarios, DDoS attack signatures, and authentication anomaly patterns all require realistic underlying data to be useful for model training.

Northhaven generates synthetic network security datasets with configurable attack scenarios embedded within realistic baseline traffic — enabling cyber defence teams to train, test, and red-team their detection systems against realistic threat patterns without exposing live network data to research environments or external tools.

AI Innovation in Telecoms: From Analytics to Autonomous Networks

The analytics maturity journey in the telecom industry follows a well-documented progression: from descriptive analytics (what happened?) through diagnostic analytics (why did it happen?) and predictive analytics (what will happen?) to prescriptive and autonomous systems (what should we do, and can the system do it unaided?). The majority of telcos today are stuck between descriptive and diagnostic, not because they lack the AI capability or the ambition, but because they cannot access the data required to move forward.

The Path to the Autonomous Network

The concept of the autonomous network — where AI systems can self-configure, self-heal, and self-optimise without human intervention — has been the stated ambition of every major mobile network operator for the past five years. The technical architecture to support it exists: cloud-native network functions, open RAN interfaces, real-time telemetry streaming, and AI inference at the edge. What has been missing is the training data to build the AI systems that would sit at the heart of it.

An autonomous network management system needs to have been trained on thousands of failure scenarios, thousands of demand surge patterns, thousands of interference events — scenarios that may each occur once per decade in a real network. Synthetic data solves this by allowing the generation of arbitrarily large, arbitrarily varied training datasets that cover the complete distribution of operational scenarios that an autonomous system might encounter in production.

The emergence of agentic AI applications — AI systems that can take autonomous actions in response to observed conditions — is accelerating the demand for high-quality training data in telecoms. Agentic network management systems that can drive automation across the full network operations stack require training data that covers the edge cases and compound failure scenarios that are most difficult to obtain from real operational data.

Snowflake, Data Mesh, and the Modern Telecom Data Stack

The data management landscape in telecoms has evolved significantly with the adoption of cloud-native platforms. Snowflake, Databricks, and Google BigQuery have become the default infrastructure for centralising telecom data — and they have dramatically lowered the cost of building centralised data platforms that can serve as the foundation for AI workloads. However, these platforms solve the infrastructure problem, not the data access problem. They can store and query petabytes of subscriber data efficiently — but they cannot make it legal or safe to put sensitive subscriber records into them without the appropriate governance and anonymisation controls.

Northhaven synthetic telecom datasets are cloud-native by design. They can be deployed directly into Snowflake environments, S3 data lakes, or any modern data platform without modification — and because they contain no real subscriber data, they can be shared across organisational boundaries, accessed by third-party analytics teams, and stored without the data retention restrictions that apply to real subscriber records. They unify the data accessibility of modern platforms with the privacy protection that telecom regulations require.

Data Security and Governance in the Telecom Ecosystem

For telecommunications operators, data security is not a feature — it is an existential requirement. The regulatory environment surrounding telecom subscriber data is among the strictest in any industry: GDPR in Europe, CCPA in California, the Telecommunications Act in the US, and a growing body of sector-specific cybersecurity mandates from regulators including the FCC, Ofcom, and BEREC. A data breach involving subscriber records does not just trigger financial penalties — it triggers regulatory investigations, licence reviews, and potentially criminal liability for executives.

The conventional approach to managing this risk has been to restrict data access as tightly as possible — creating the data silos that prevent AI innovation. The alternative approach that Northhaven enables is to replace the real data with synthetic data that is structurally and statistically equivalent, removing the regulatory exposure entirely while preserving full analytical value.

| Regulation | Jurisdiction | Key Telecom Data Constraint | Synthetic Data Impact |
|---|---|---|---|
| GDPR | European Union | Subscriber data cannot be processed without explicit consent or legitimate interest | Eliminated: synthetic data is not personal data |
| CCPA / CPRA | California, USA | Consumers have right to deletion; sharing subscriber data with third parties restricted | Eliminated: no real records to delete or restrict |
| Telecom Act §222 | USA | CPNI (Customer Proprietary Network Information) strictly protected | Eliminated: synthetic CPNI-equivalent data contains no real customer CPNI |
| NIS2 Directive | European Union | Network and information security requirements for critical infrastructure operators | Simplified: reduced data attack surface |
| DORA | European Union | Operational resilience testing requires realistic data for simulations | Enabled: synthetic data ideal for resilience testing |

Best-in-Class Security by Design

The security posture of a synthetic data approach is fundamentally stronger than that of an anonymised real data approach — for a simple reason: best-in-class security means not having the data in the first place. Anonymised data can be re-identified through linkage attacks, auxiliary data sources, or simple inference — a risk that grows as external datasets become richer and more accessible. Synthetic data generated by Northhaven contains no records derived from real individuals and cannot be de-anonymised, because there is nothing to de-anonymise. It is statistically equivalent to real data, but structurally independent of it.
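Two simple checks illustrate the linkage argument. The first estimates how exposed an anonymised extract is by counting records that are unique on a set of quasi-identifiers. The second counts how many synthetic rows coincide exactly with a real row on those same fields. Both are hedged sketches with hypothetical function and column names. Passing them is a basic sanity check, not a guarantee of privacy.

```python
from collections import Counter


def _key(row, quasi_identifiers):
    """Tuple of the quasi-identifier values for one row (a dict)."""
    return tuple(row[q] for q in quasi_identifiers)


def unique_fraction(rows, quasi_identifiers):
    """Share of rows whose quasi-identifier combination appears exactly once."""
    if not rows:
        return 0.0
    counts = Counter(_key(r, quasi_identifiers) for r in rows)
    return sum(1 for r in rows if counts[_key(r, quasi_identifiers)] == 1) / len(rows)


def exact_match_rate(real_rows, synthetic_rows, quasi_identifiers):
    """Share of synthetic rows that coincide with some real row on these fields."""
    if not synthetic_rows:
        return 0.0
    real_keys = {_key(r, quasi_identifiers) for r in real_rows}
    hits = sum(1 for s in synthetic_rows if _key(s, quasi_identifiers) in real_keys)
    return hits / len(synthetic_rows)
```

A high `unique_fraction` on fields such as postcode, age band, and device model suggests the extract is vulnerable to linkage with an auxiliary dataset. A non-zero `exact_match_rate` on a synthetic dataset is worth investigating, although coincidental matches on coarse fields are expected.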

This distinction is increasingly important as regulators develop more sophisticated frameworks for evaluating anonymisation quality. The UK ICO, the EDPB, and the FTC have all issued guidance in recent years suggesting that many conventionally anonymised datasets do not meet the threshold required to be treated as truly anonymous under applicable law. Northhaven’s approach sidesteps this risk entirely by generating data that was never derived from real records to begin with.

Enterprise Deployment: From Proof of Concept to Production

The deployment of synthetic telecom data at enterprise scale follows a structured path that Northhaven has refined across engagements in financial services, MedTech, and — now — telecommunications. The process is designed to be scalable from a targeted proof of concept to a full production data environment, with no changes to the underlying methodology.

End-to-End Integration with Existing Telco Infrastructure

Northhaven synthetic telecom datasets are designed for end-to-end integration with the data infrastructure that enterprise telcos already operate. Our datasets are delivered in formats compatible with all major data platforms — Snowflake, Databricks, BigQuery, Redshift, and on-premise Hadoop environments — and are accompanied by full technical documentation, schema specifications, and data dictionaries that enable immediate use by data science and engineering teams.

The workflow for integrating Northhaven synthetic data into an existing telco AI pipeline typically requires no architectural changes. The synthetic dataset is a drop-in replacement for the real data that the pipeline would otherwise require — with the same schema, the same column names, the same data types, and the same statistical properties. The only difference is that it contains no real subscriber records.
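A drop-in claim of this kind is straightforward to verify before a pipeline run. The following sketch compares the schema a pipeline expects with the schema inferred from a delivered dataset. The helper names and the example columns are hypothetical, and types are inferred from the first row only.

```python
def schema_of(rows):
    """Infer {column: type name} from the first row of a list of dicts."""
    if not rows:
        return {}
    return {column: type(value).__name__ for column, value in rows[0].items()}


def schema_diff(expected, actual):
    """Report columns that are missing, unexpected, or of a different type."""
    shared = set(expected) & set(actual)
    return {
        "missing": sorted(set(expected) - set(actual)),
        "extra": sorted(set(actual) - set(expected)),
        "type_mismatch": sorted(c for c in shared if expected[c] != actual[c]),
    }


def is_drop_in(expected, actual):
    """True when column names and types agree exactly."""
    return not any(schema_diff(expected, actual).values())
```

Running `schema_diff(expected_schema, schema_of(synthetic_rows))` before training gives a quick signal that column names and types line up. Statistical properties need a separate fidelity comparison.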

For telcos operating in multi-cloud or hybrid environments, Northhaven datasets can be partitioned and replicated across cloud providers without the data sovereignty restrictions that apply to real subscriber records. This enables truly global AI development teams to work from the same dataset simultaneously — eliminating the data localisation bottlenecks that prevent enterprise AI teams from collaborating across jurisdictions.

Technical documentation — full data dictionary, schema specification, and generation methodology.

Fidelity report — statistical comparison between synthetic and reference data across all key metrics.

Compliance certificate — formal documentation that the dataset contains no personal data under GDPR, CCPA, and applicable telecom regulations.

Integration guide — step-by-step instructions for loading the dataset into Snowflake, Databricks, BigQuery, or any other target platform.

Model training baseline — suggested train/test/validation splits and performance benchmarks for the most common telecom ML use cases.
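As an illustration of the train/test/validation splits mentioned in the last item, one reasonable approach is to assign each subscriber to a split by hashing its identifier. All records for a subscriber then land in the same split, and the assignment is reproducible across runs. This is a sketch under assumed proportions (70/15/15) with a hypothetical function name, not the split Northhaven ships.

```python
import hashlib


def assign_split(subscriber_id, train=0.70, validation=0.15):
    """Deterministically map a subscriber ID to train, validation, or test."""
    if train < 0 or validation < 0 or train + validation > 1:
        raise ValueError("split fractions must be non-negative and sum to at most 1")
    digest = hashlib.sha256(str(subscriber_id).encode("utf-8")).digest()
    # Interpret the first 8 bytes as a number in [0, 1).
    bucket = int.from_bytes(digest[:8], "big") / 2**64
    if bucket < train:
        return "train"
    if bucket < train + validation:
        return "validation"
    return "test"
```

Splitting by subscriber rather than by row avoids the same subscriber's behaviour appearing in both training and evaluation data, which would otherwise inflate measured accuracy for tasks such as churn prediction.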

From Data to Actionable Insights: The Northhaven Telecom Data Advantage

The ultimate measure of any data initiative is not the sophistication of the technology — it is the quality of the actionable insights it enables and the business outcomes those insights drive. Northhaven’s synthetic telecom data creates value by accelerating the path from raw data to deployable AI models — compressing a timeline that typically takes 12–24 months into a 4–8 week engagement.

The modernisation of a telco’s data and AI stack is not a single project; it is a continuous journey. Each use case that is successfully deployed creates new capabilities, new data pipelines, and new opportunities for AI innovation. Northhaven’s approach is designed to accelerate this journey at every stage: from the initial PoC that proves the business case, through the first production deployment, to the ongoing expansion of the synthetic data environment as new use cases are identified.

New Business Models Enabled by Synthetic Telecom Data

Beyond optimising existing operations, synthetic telecom data enables entirely new business models that were previously impossible due to data sharing constraints. Consider the following scenarios that Northhaven synthetic data makes commercially viable for the first time:

The Future of Telecom Data: 5G, AI, and the Connected Ecosystem

The transition to 5G network infrastructure is not just a capacity upgrade — it is a fundamental change in what telecom data looks like and what it enables. 5G networks generate dramatically more granular telemetry than their predecessors: millisecond-level latency measurements, per-slice quality metrics, device-level signal quality reporting, and massive IoT device connectivity events. This data explosion is simultaneously the biggest opportunity and the biggest challenge in the history of telecom data management.

The ecosystem that 5G enables — smart cities, autonomous vehicles, industrial IoT, remote surgery — generates new categories of data that did not exist under 4G. Network slicing creates virtualised network instances for specific use cases, each generating its own telemetry stream. Edge computing nodes process data locally before it reaches the core network. These architectural changes require new approaches to data collection, storage, and analytics — and create new demands for training data for the AI systems that will manage this complexity.

Northhaven is developing synthetic data generation capabilities specifically designed for the 5G and beyond-5G data environment — including network slicing telemetry, edge compute performance data, and the massive IoT device event streams that 5G connectivity enables. Our goal is to ensure that as the telecom data landscape evolves, the synthetic data capabilities that enable AI development evolve with it — keeping pace with the innovation cycle rather than lagging behind it.

The Role of Synthetic Data in Telecom Modernisation

As telcos execute on their modernisation roadmaps, migrating from monolithic BSS/OSS stacks to cloud-native, microservices-based architectures, the role of synthetic data as an enabler of operational efficiency becomes even more critical. Modernisation projects require testing new systems against realistic data at scale, and the data available for testing is almost never the real production data, which cannot be exposed to development and staging environments.

Synthetic data solves this problem elegantly: test environments can be populated with synthetic subscriber records, synthetic call detail records, synthetic billing data, and synthetic network events that are statistically equivalent to production data — enabling realistic load testing, integration testing, and AI model validation without any of the regulatory risk associated with using real data in non-production environments.

Getting Started with Northhaven Synthetic Telecom Data

Northhaven Analytics offers a structured engagement process designed for enterprise telecom clients. Every engagement begins with a free technical scoping call and a mutual NDA — ensuring that the confidentiality of your data architecture, business requirements, and technical specifications is protected from the first conversation.

The typical engagement for a first synthetic telecom dataset follows a three-step process: schema discovery and specification, proof-of-concept dataset generation, and full-scale production dataset delivery. The proof-of-concept phase — in which we generate a sample synthetic dataset that demonstrates the statistical fidelity and schema compatibility of our output — is typically completed within one to two weeks and is provided at no cost as part of the engagement process.

Request a Free Synthetic Telecom Dataset PoC

Tell us about your use case — churn prediction, network anomaly detection, fraud modelling, 5G planning, or any other telecom AI application — and we will generate a sample synthetic dataset that demonstrates what Northhaven can produce for your specific data environment. No real data required from your side. NDA from day one. Delivered in weeks, not months.

| Telecom Data Type | Use Case | Generation Time | Scale |
|---|---|---|---|
| Subscriber CDR (Call Detail Records) | Churn prediction, fraud detection, personalisation | 1–2 weeks | Unlimited |
| Network KPI Telemetry | Network optimisation, predictive maintenance, autonomous ops | 1–2 weeks | Unlimited |
| Subscriber Billing Records | Revenue assurance, pricing analytics, fraud detection | 1–2 weeks | Unlimited |
| 5G Slice Performance Data | 5G planning, SLA management, slice optimisation | 2–3 weeks | Enterprise |
| IoT Device Event Streams | Smart city analytics, industrial IoT, autonomous vehicle data | 2–3 weeks | Enterprise |
| Security / Fraud Event Logs | Fraud model training, cybersecurity red-teaming, threat detection | 1–2 weeks | Unlimited |
| Customer Experience Surveys + Network Join | Personalisation, NPS prediction, proactive customer care | 2–3 weeks | Enterprise |

Ready to unlock your telecom data potential?

Book a free technical consultation. We’ll scope your use case and deliver a proof-of-concept synthetic telecom dataset — NDA from day one, no real subscriber data required.