Northhaven Analytics was founded on a shared frustration: quantitative researchers were limited not by computational power but by access to data. Both founders saw the same pattern. ML engineers had the tools but no access to data. Quants needed behavioural depth, but the databases available to them delivered noise.

Northhaven was built in response. We designed an engine to address the structural data scarcity problem. (Meet the Founders behind this vision).

Why Northhaven rejected the “general-purpose AI” paradigm

Most vendors start from a wrong assumption: they build generic models and hope for realistic output. The team at Northhaven understood early that this fails, because it ignores the structure of financial data.

Finance is not a collection of disconnected tables. It is a network of probability distributions and conditional dependencies, and a general-purpose model cannot capture it.

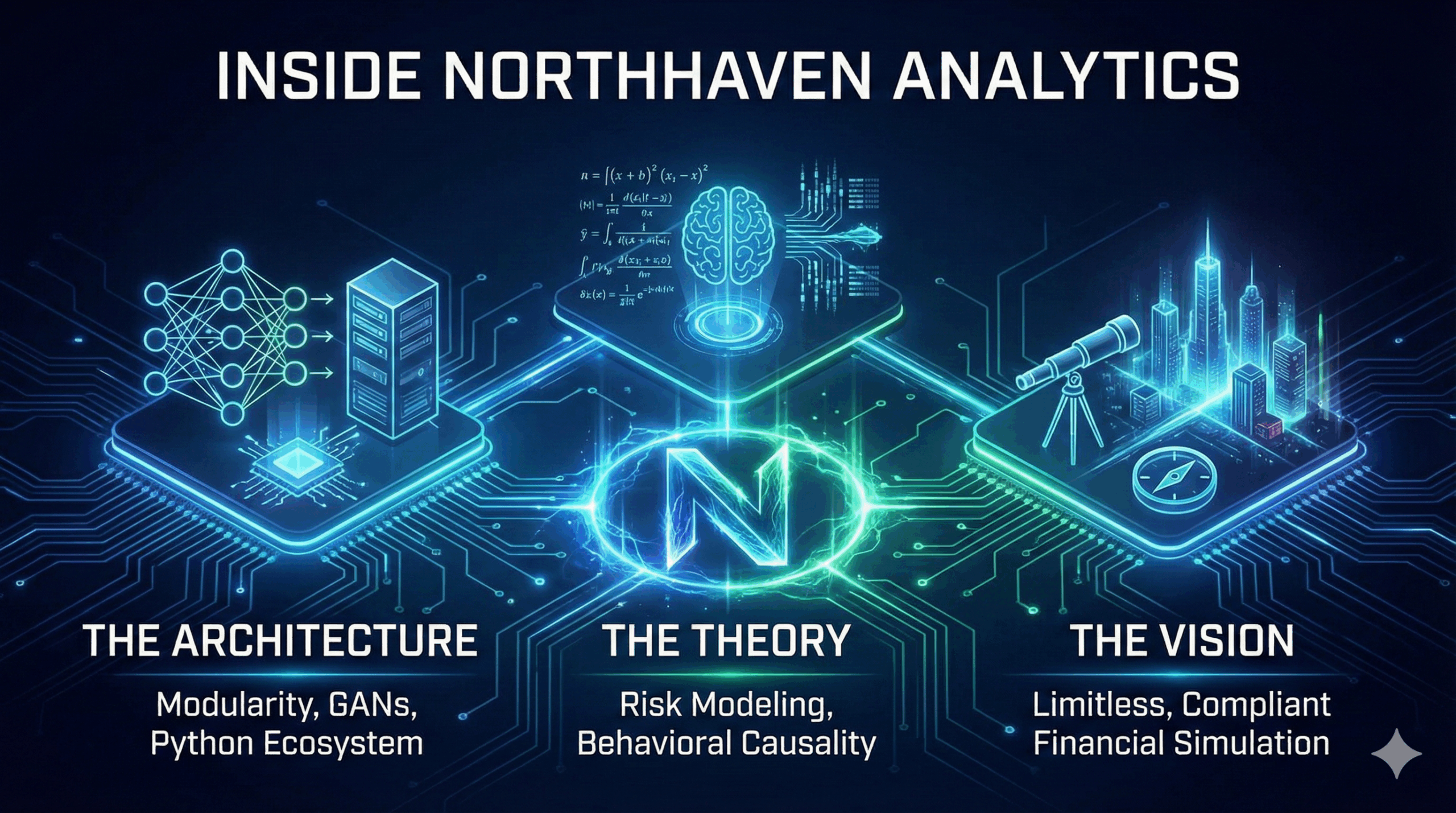

Northhaven therefore constructed its architecture from the ground up and engineered it specifically for financial behaviour. As a result, our approach to synthetic financial data generation does not merely imitate data. It reconstructs the mechanisms that produce it.

Building generative models that understand financial behaviour

The generative engine at Northhaven combines multiple components. A CTGAN-derived core handles mixed variables, that is, continuous and categorical columns in the same table. Convolutional layers then extract temporal patterns from sequences such as transaction histories. (Read about our Synthetic Banking Datasets Engine).

This is not standard computer vision. It is a financial adaptation of convolution that identifies long-horizon cycles, for instance credit usage and repayment arcs.
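To make the idea of convolution over a financial time series concrete, here is a minimal sketch. It is not Northhaven's engine: the utilisation figures are made up, and the kernel is hand-picked for illustration, whereas a trained convolutional layer would learn its kernels from data.

```python
from typing import List


def conv1d(series: List[float], kernel: List[float]) -> List[float]:
    """Valid-mode 1-D cross-correlation, the core operation of a convolutional layer."""
    k = len(kernel)
    return [
        sum(series[i + j] * kernel[j] for j in range(k))
        for i in range(len(series) - k + 1)
    ]


# Hypothetical monthly credit utilisation (fraction of limit) for one account:
# a build-up of usage, a repayment phase, then a second build-up.
utilisation = [0.2, 0.4, 0.6, 0.8, 0.6, 0.4, 0.2, 0.4, 0.6, 0.8]

# Illustrative kernel: responds positively when usage rises across a
# three-month window and negatively when it falls.
rise_kernel = [-1.0, 0.0, 1.0]

response = conv1d(utilisation, rise_kernel)

# Label each window by the sign of the response (with a small tolerance).
phases = [
    "build-up" if r > 1e-9 else "repayment" if r < -1e-9 else "turning point"
    for r in response
]
```

Stacking several such layers widens the window each output can see, which is how a convolutional model can pick up cycles that span many months rather than three.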

The discriminator also plays a different role. Unlike the discriminator in a typical GAN, it evaluates financial logic: it penalizes outputs that violate risk progression. The model therefore learns how finance behaves, and this distinction is the foundation of its accuracy.
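One way to picture a logic penalty of this kind is sketched below. The rule is an assumption chosen for illustration, not Northhaven's actual constraint: an account's delinquency stage (0 = current, 1 = 30 days past due, 2 = 60, 3 = 90) may worsen by at most one bucket per month, because an account cannot become 90 days late in a single month.

```python
from typing import Sequence


def progression_penalty(stages: Sequence[int]) -> int:
    """Count month-to-month steps where the delinquency stage worsens by more than one bucket.

    The one-bucket-per-month rule is a hypothetical example of a
    risk-progression constraint, not a rule taken from Northhaven's engine.
    """
    return sum(1 for prev, cur in zip(stages, stages[1:]) if cur - prev > 1)


# Hypothetical generated sequences of monthly delinquency stages.
plausible = [0, 1, 2, 1, 0]    # worsens gradually, then cures
implausible = [0, 3, 0, 2, 3]  # jumps straight from current to 90 days late

penalties = {
    "plausible": progression_penalty(plausible),
    "implausible": progression_penalty(implausible),
}
```

In a GAN training loop, a term like this would typically be weighted and added to the adversarial loss, so that the generator is pushed away from sequences that look statistically realistic but are logically impossible.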

Synthetic financial data as a solution to the non-stationarity paradox

In quantitative finance, the greatest challenge is non-stationarity. Markets shift, macro-regimes evolve, and consumer behaviour adapts. Real data, however, represents only the past.

Northhaven’s methodology views this as an opportunity. By reconstructing dependencies, synthetic financial data generation produces datasets that reflect the underlying logic of financial behaviour, so they are not trapped in one regime.
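A toy sketch can illustrate what "not trapped in one regime" means in practice. Everything in it is an assumption made for illustration (the logistic form, the slope, the regime names and intercepts); it is not Northhaven's model. The dependency between utilisation and default risk is held fixed, while a regime parameter shifts the baseline, so the same structure can generate data for conditions that a single historical sample never covered.

```python
import math
import random
from typing import List, Tuple

# Assumed, fixed dependency of default risk on credit utilisation.
SLOPE = 3.0
# Hypothetical regime-specific baselines (log-odds of default at zero utilisation).
REGIME_INTERCEPT = {"benign": -4.0, "stress": -2.5}


def default_probability(utilisation: float, regime: str) -> float:
    """Logistic link: same slope in every regime, different intercept."""
    z = REGIME_INTERCEPT[regime] + SLOPE * utilisation
    return 1.0 / (1.0 + math.exp(-z))


def sample_accounts(n: int, regime: str, seed: int = 0) -> List[Tuple[float, bool]]:
    """Draw n synthetic (utilisation, defaulted) pairs for the given regime."""
    rng = random.Random(seed)
    rows = []
    for _ in range(n):
        utilisation = rng.random()
        defaulted = rng.random() < default_probability(utilisation, regime)
        rows.append((utilisation, defaulted))
    return rows


def default_rate(rows: List[Tuple[float, bool]]) -> float:
    return sum(defaulted for _, defaulted in rows) / len(rows)


benign = sample_accounts(5000, "benign")
stress = sample_accounts(5000, "stress")
```

Comparing `default_rate(benign)` with `default_rate(stress)` shows the baseline moving while the utilisation effect stays the same, which is the kind of controlled regime shift a purely historical dataset cannot provide.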

This is why synthetic datasets often outperform real ones: they are not distorted by historical accidents, and they embed fundamental relationships instead. (Read more in Why Synthetic Financial Data Will Replace Real Data).

A new modelling landscape: from compliance-driven constraints to unlimited experimentation

One of the most transformative effects is the shift in workflow. A team used to waiting weeks for data access can now generate data instantly. Iteration speeds up, and model testing is no longer constrained by data availability.

Synthetic financial data generation becomes the primary engine, not a fallback. Researchers can scale their ambitions to match the capabilities of modern machine learning. (See our AI Risk Modelling Datasets).

Regulatory neutrality as a competitive advantage

Privacy was historically a barrier. Synthetic data changes that equation: Northhaven’s architecture ensures zero recoverability, and the generated datasets contain zero personal information.
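A common sanity check for claims of this kind is the distance to closest record: for every synthetic row, measure how far it is from the nearest real row and flag exact copies. The sketch below is a generic illustration with made-up numbers, not Northhaven's validation procedure, and it assumes the features have already been scaled to comparable ranges.

```python
import math
from typing import List, Sequence


def distance_to_closest_record(
    synthetic: Sequence[Sequence[float]], real: Sequence[Sequence[float]]
) -> List[float]:
    """For each synthetic row, the Euclidean distance to its nearest real row."""
    return [min(math.dist(s, r) for r in real) for s in synthetic]


# Hypothetical, already-scaled feature rows (e.g. utilisation, repayment ratio).
real_rows = [(0.1, 0.9), (0.5, 0.5), (0.8, 0.2)]
synthetic_rows = [(0.12, 0.85), (0.5, 0.5), (0.3, 0.3)]

dcr = distance_to_closest_record(synthetic_rows, real_rows)

# Indices of synthetic rows that reproduce a real row exactly.
exact_copies = [i for i, d in enumerate(dcr) if d == 0.0]
```

Here the second synthetic row is flagged because it duplicates a real record. A check like this is a heuristic: passing it is evidence against memorisation, but it does not by itself establish that nothing can be recovered.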

Institutions can therefore grant full access to behavioural structures without legal risk. For the first time, teams can collaborate freely. This regulatory neutrality is a competitive advantage: organisations simply move faster. (See our Data Validation and Advisory).

How Northhaven is shaping the future of financial AI

Northhaven Analytics is not positioning itself as another software vendor. Its mission is to establish synthetic data as the new baseline infrastructure for quantitative finance. By developing a proprietary generative engine and an integrated Python library with automated model versioning and Git-based dataset control, the company is creating an ecosystem rather than a tool. This ecosystem evolves with every synthetic generation, incorporating new financial behaviours, new structural dependencies and new domain-specific learning signals. The long-term vision is not to replicate the limitations of real data, but to surpass them — enabling institutions to train models on financial universes that are richer, cleaner and more complete than anything captured in the historical record.

Conclusion: Northhaven and the next era of financial modelling

The transition from real data to synthetic data will define the next decade of quantitative research. It is not a stylistic change, but a structural one — a redefinition of how financial systems are modelled and understood. Northhaven Analytics stands at the core of this transformation by building an engine that captures the true mechanics of financial behaviour. As synthetic financial data generation becomes the new global standard, institutions that adopt it early will operate in a modelling landscape where data is no longer a constraint but an infinitely scalable resource. Northhaven’s work shows that the future of finance will not be built on historical datasets, but on generative architectures capable of expressing every possible financial reality.