Financial institutions rely heavily on credit bureau data. However, costs are high. Moreover, data use restrictions limit analytical depth. In addition, compliance risks are a constant burden.

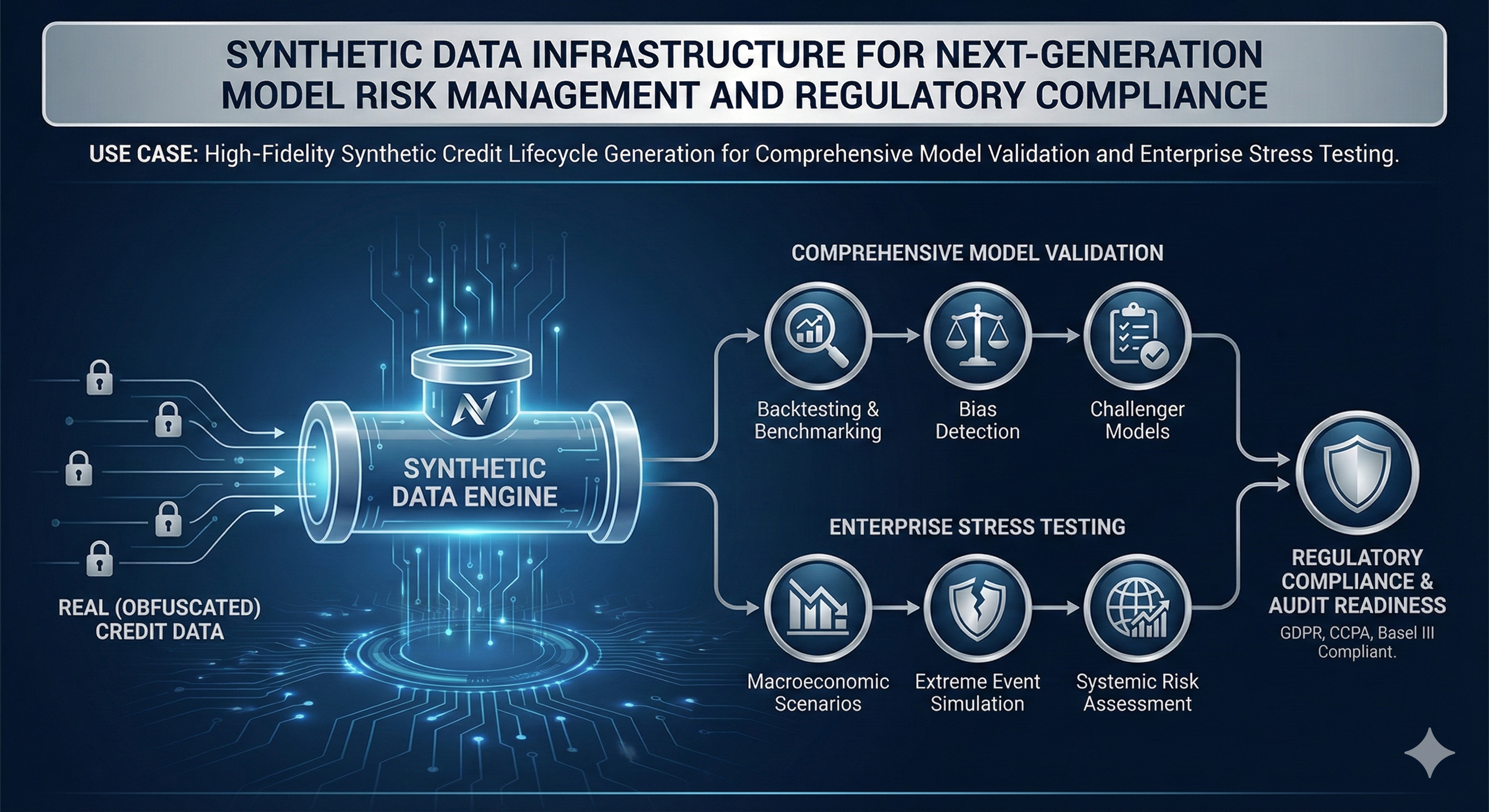

Therefore, Northhaven Analytics delivers a solution. Specifically, we provide a custom Machine Learning (ML) engine. It generates statistically identical synthetic credit scoring datasets. In fact, these replicate complex behavioral sequences. Furthermore, they preserve account correlations found in real files.

Consequently, this enables banks and fintechs to train models. Specifically, they can validate granular scoring models at scale. Ultimately, this eliminates production data dependencies. And it ensures full GDPR compliance.

Industry Context & Pain Points

Credit risk teams face significant obstacles. Specifically, operational friction is high. This occurs when accessing comprehensive bureau data.

Cost Prohibitions for Granular Data

Accessing deep historical data is expensive. For instance, 60-month payment tapes cost a fortune. This applies on a per-query basis. Consequently, this prevents the use of large datasets. Therefore, robust training of deep learning models becomes impossible.

Regulatory and Compliance Restrictions

Regulatory mandates are strict. For example, GDPR limits PII usage. Therefore, sharing granular history is difficult. This is true for third-party vendors. Also, it applies internally between departments. Consequently, legal sign-offs take months. Data masking degrades utility. Ultimately, this slows model iteration.

Scarcity of Edge Cases

Modelers struggle to find specific events. For instance, consumers with thin files. Or, specific multi-loan default sequences. However, these are high-impact events. Therefore, they are crucial for refining credit loss forecasting.

How Northhaven Solves It: The Synthetic Credit Engine

The requirement is clear. Essentially, we must decouple analytical insights from PII. Therefore, synthetic data achieves this. It trains a generative model on the statistical footprint. Consequently, it creates new, artificial records. Crucially, they remain statistically accurate.

This approach provides a safe alternative. In fact, it is legally safe and scalable. Moreover, it requires no PII transfer. Thus, researchers can perform full-history lookups. They can also run large-scale simulations. (Read about our Financial Data Simulation Tools).

Ultimately, this transforms development. It shifts from a bottleneck to a rapid workflow.

Northhaven Analytics addresses this challenge. Specifically, we deploy a modular synthetic data engine. It is customized to your internal definitions.

Core Generative Architecture

The engine is advanced. Specifically, it uses a CTGAN-based architecture. Moreover, it utilizes a sophisticated Discriminator. This is crucial for two reasons:

- Correlation Preservation: It excels at learning dependencies. For example, the link between credit mix and delinquency. This is essential for accurate risk scoring.

- High-Fidelity Sequences: The model learns temporal dynamics. Consequently, it synthesizes realistic month-by-month histories. (See our Synthetic Banking Datasets Engine).

Modular and Scalable Deployment

The system is a Python library. Therefore, it provides enterprise-grade controls.

- Model Module: This handles training logic. Users can generate millions of histories instantly.

- Data Manager Module: This ensures consistent structuring. Specifically, fields adhere to bureau schemas. Therefore, integration is immediate.

- Git Controller Module: This provides versioning. It commits the trained model to a repository. Consequently, this ensures full auditability.

Financial Logic Constraints

Northhaven embeds specific rules. For instance, underwriting and fraud rules. These acts as soft constraints. Furthermore, the continuous-learning capability helps. It allows periodic retraining. Therefore, the data does not suffer from drift. (Learn about our Data Validation and Advisory).

Technical Architecture

The Northhaven SDG is engineered for high-fidelity replication of complex, multi-dimensional financial datasets while prioritizing privacy.

Generative Engine:

The core generative engine utilizes advanced neural network architectures, primarily CTGAN derivatives for static portfolio characteristics and Temporal Convolutional Networks (T-CNNs) or Transformer Discriminators for modeling time-series and behavioral sequences (e.g., 24 months of transaction history). The use of conditional generation allows the bank to explicitly guide the data generation to focus on specific cohorts or rare-event scenarios (e.g., generating 10 million synthetic records exhibiting a simultaneous 20 percent drop in income and a 15 percent increase in debt-to-income).

Data Fidelity Metrics:

Fidelity is measured through a continuous, auditable process focused on preserving statistical properties:

- Univariate Distribution Matching: Verifying that the distribution of individual features (e.g., age, balance) is preserved using statistical tests like Kolmogorov-Smirnov.

- Correlation Retention: Ensuring that the pairwise and multivariate correlation structures between features (crucial for risk modeling) are accurately replicated, quantified by Synthetic-to-Real Correlation Metrics.

- Temporal Fidelity: For time-series data, assessing the preservation of sequence dependencies and autocorrelation functions.

Privacy Guarantees and Leakage Assessment:

Privacy protection is certified through explicit technical controls:

- Differential Privacy (DP): DP mechanisms are embedded during the training phase, ensuring that the influence of any single statistical input in the final generator model is bounded, thereby protecting against reconstruction attacks.

- Leakage Evaluation: Auditable Membership Inference Protection and Nearest Neighbor Distance tests are executed to provide quantitative proof that specific real records cannot be identified or closely reconstructed from the synthetic output.

Drift Evaluation:

The system includes continuous Drift Evaluation features, allowing the client to monitor the long-term statistical stability of the synthetic data against the production data. This ensures that the synthetic book remains relevant and does not introduce synthetic bias as the real portfolio evolves.

Implementation Steps

The deployment is structured as a governance-aligned, multi-phase engagement designed for seamless integration within a regulated environment.

| Step | Description | Duration |

| 6.1 Ingestion of Schema and Business Rules | Secure ingestion of the client’s data dictionary, schema definitions, and a formalized list of critical Financial Logic and Hard Constraints. | 2 Weeks |

| 6.2 Model Design and Architecture Tailoring | Configuration of the base generative architecture (e.g., CTGAN + T-CNN) and integration of client-specific logic modules into the loss function. | 1 Week |

| 6.3 Iterative Training and Calibration | Training the ML generator on statistical aggregates. This phase involves iterative tuning and calibration to achieve optimal Distribution Alignment and Correlation Retention. | 4 Weeks |

| 6.4 Fidelity and Privacy Testing | Rigorous execution of all fidelity metrics (KL, Wasserstein) and Leakage Assessments. Generation of comprehensive Audit and Certification Reports. | 2 Weeks |

| 6.5 Synthetic Dataset Deployment | Deployment of the trained generator model (Artefact) to the client’s designated Sandbox Environment or secure API endpoint. | 1 Week |

| 6.6 Internal Validation and Benchmarking | Client Model Validation teams use the generated synthetic data for stress testing, model challenger comparison, and regulatory scenario coverage expansion. | Ongoing |

Business Impact

The implementation of the Northhaven SDG drives material business impact across risk management, compliance, and innovation velocity.

- Regulatory Compliance and Model Risk Reduction: Provides statistically robust, auditable data for validating complex ML models, directly addressing the scrutiny of SR 11-7 and EBA/ECB expectations, thereby lowering capital add-ons associated with Model Uncertainty (MU).

- Accelerating AI Adoption: Reduces the time spent on data preparation and provisioning from months to minutes, significantly increasing the velocity at which new AI models can be trained, tested, and deployed.

- Enabling Experimentation: Allows data scientists to securely experiment with new model architectures (e.g., deep learning) that require massive datasets, without the high costs and risk associated with production data access.

- Eliminating Privacy Barriers: Enables external collaboration and vendor validation using certified, privacy-safe data, eliminating legal and compliance bottlenecks that previously stalled projects.

- Enabling Cross-Departmental Collaboration: Provides a single, statistically identical dataset that can be shared between Development, Validation, and Internal Audit teams, standardizing the benchmarking environment and improving organizational efficiency.

KPIs & Measurable Outcomes

The success of the Northhaven solution is measured by quantifiable improvements in data quality and operational efficiency.

| KPI | Target Metric | Description |

| Statistical Fidelity Score | Greater than 90 percent | Composite score measuring marginal and conditional distribution matching versus real data. |

| Model Validation Time Saved | 70 percent reduction | Decrease in the time required to complete a comprehensive model validation cycle due to instant data access. |

| Rare Event Coverage | 100 percent of required tail scenarios | Ability to generate sufficient data volume for every specified low-frequency, high-severity scenario. |

| Drift Reduction | Less than 5 percent annualized drift | Maintaining the statistical relevance of the synthetic data over time compared to production data. |

| Synthetic-to-Real Correlation Retention | Greater than 0.98 for critical feature pairs | Quantified measure of preserving the relationship between key financial variables. |

| External Sharing Compliance Cost | Near elimination of PII compliance burden | Reduction in legal/governance hours spent clearing data for third-party use. |

Conclusion

The increasing complexity of financial ML models and the permanence of global data privacy regulations confirm that synthetic data is not an optional tool, but an essential component of modern financial AI infrastructure. By delivering custom-trained ML generators, Northhaven Analytics provides financial institutions with a scalable, privacy-safe, and regulator-friendly mechanism for creating statistically accurate representations of their entire credit lifecycle. This capability is critical for supporting defensible model governance, driving operational efficiency, and enabling the robust, full-scale validation necessary to deploy next-generation AI systems safely and compliantly into production environments.