By Northhaven Analytics Security Team

Introduction: Why Data Leakage is the Silent Killer of AI Models and Data Security

In the high-stakes world of artificial intelligence, predictive models are only as robust as the training data they consume. However, a silent destroyer often lurks within the complex data pipeline: data leakage. Unlike a catastrophic data breach where confidential data is stolen by malicious hackers, data leakage in machine learning is a structural methodology failure where unseen data accidentally infiltrates the model’s training process.

Data leakage occurs when information from the test set or future data leaks into the training set, allowing the machine learning model to inadvertently „cheat” during training. This phenomenon leads to overly optimistic performance metrics—such as 99% accuracy—that inevitably collapse when the model is deployed to face real-world data.

For data scientists and ML engineers, understanding how to detect and prevent data leakage is not merely about preserving model accuracy; it is a critical data security issue. Data leakage increases the risk of deploying flawed systems that can expose sensitive data or lead to erroneous, bias-filled decision-making in sectors like finance and healthcare.

In this definitive, deep-dive guide, we will explore the types of data leakage, identify the common causes of data leakage, and provide a comprehensive framework of data leakage prevention best practices to ensure your AI training data remains robust, secure, and truly predictive.

What is Data Leakage? Defining the Problem for Machine Learning

Data leakage (often referred to simply as a leak) happens when a machine learning algorithm utilizes information during the training phase that it would not have access to in a real-world production environment. Essentially, the data during training contains „spoilers” or proxies for the target variable that reveal the answer.

Data leakage occurs when sensitive or highly predictive features that are correlated with the outcome—but are not causal—are erroneously included in the training data. This results in a model that memorizes the answer key rather than learning the underlying pattern.

Data Leakage vs. Data Breach: The Critical Distinction

It is vital for organizations to distinguish between a data leak vs a data breach, as they require different remediation strategies.

- Data Breach: A cybersecurity incident where sensitive data is exposed to unauthorized parties. Data breaches occur due to hacking, phishing, or poor data protection. This involves the theft of personal data or social security numbers.

- Data Leakage: A methodological error in data science where information leakage distorts the model’s learning process. It is an internal data science failure, not necessarily an external hack.

However, data leakage also occurs in the context of privacy. If a model overfits due to leakage, it might memorize personally identifiable information (PII), leading to a privacy data leak. Therefore, preventing data leakage is essential for both ensuring high-performance models and preventing data privacy violations under regulations like GDPR.

The Anatomy of a Leak: Main Types of Data Leakage

To effectively prevent data leakage, we must first identify its specific form. There are two primary type of data leakage: Target Leakage and Train-Test Contamination.

1. Target Leakage (The „Future Data” Problem)

Target leakage is the most insidious form. It occurs when the training data includes features that are generated after the target variable is determined. This puts future data into the past, creating a temporal paradox in the dataset.

- Example Scenario: In a customer churn prediction model, including a feature like „customer service cancellation call duration” would lead to data leakage. This event happens after the decision to churn has effectively been made.

- The Result: The machine learning model learns that „long calls = churn” but cannot use this in real-time prediction because the call hasn’t happened yet at the moment of prediction.

2. Train-Test Contamination and Unseen Data

This leakage in machine learning occurs when data splitting is performed incorrectly or sloppily. If information from the test set bleeds into the training set during data preprocessing, the model is essentially graded on exam questions it has already studied.

- Common Cause: Applying global normalization or data transformations (like Min-Max scaling or Z-score standardization) to the entire dataset before splitting it into train and test sets.

- The Mechanism: This allows the model to learn the distribution (mean and variance) of the unseen data, causing statistical leakage in data distributions.

Common Causes of Data Leakage in Machine Learning Pipelines

Why does data leakage occur so frequently? It is rarely intentional. The causes of data leakage are often subtle errors buried deep in the data pipeline code.

1. Improper Data Splitting and Data Access

Failure to isolate the test set completely is the leading cause. Data scientists must ensure that the split happens before any exploration or imputation. Unauthorized access to the data test set during the exploratory phase can unconsciously bias feature selection.

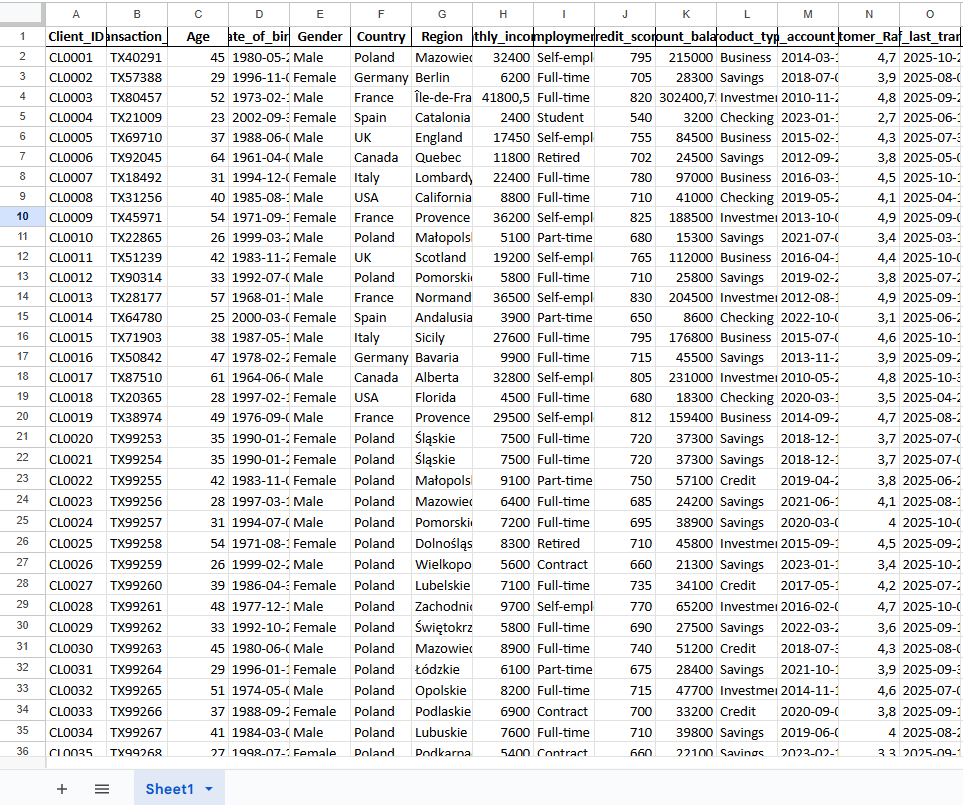

2. Leaky Features and Proxies

Including IDs, transaction timestamps, or row numbers that act as proxies for the target variable can lead to data leakage. For example, if account numbers are assigned sequentially, a higher number might correlate with a newer (and thus active) user, leaking tenure information.

3. Data Preprocessing Errors and Transformations

Imputing missing values using the mean of the entire dataset instead of just the training set is a classic error. This introduces information about the unseen data into the training environment. Any data transformations must be fit only on training data points.

4. Temporal Leakage in Time Series

In time-series data, random splitting (K-Fold Cross-Validation) instead of time-based splitting is fatal. It allows the model to train on future data to predict the past. You must always split chronologically to prevent leakage.

These errors lead to data leakage, creating a false sense of security. Data scientists must rigorously monitor data access and transformations to avoid these pitfalls.

The Security Risk: When Leakage Exposes Sensitive Data

Beyond model performance, data leakage poses a severe security risk. In regulated industries like banking and healthcare, sensitive data across systems must be handled with extreme care.

If a model is trained on sensitive training data without proper data sanitization or differential privacy, it can inadvertently expose sensitive data through model inversion attacks. Identifiable information or confidential data embedded in the model weights can be extracted by adversaries.

Data leakage occurs when sensitive data is not treated with robust data protection measures like data encryption or tokenization. Handling sensitive data requires strict data governance policies. Failure to protect sensitive data can result in a data breach compliance nightmare under GDPR, CCPA, or HIPAA.

How to Detect Data Leakage in Your Models

Data leakage detection and prevention starts with vigilance and forensic analysis of model results. High performance is often the first red flag.

1. The „Too Good To Be True” Metric

If your model achieves 99% accuracy or an AUC of 1.0 immediately, it likely indicates data leakage. Real-world problems rarely allow for perfect predictions. Such metrics often indicate data leakage.

2. Feature Importance Analysis

Run feature importance charts (e.g., SHAP values). If one feature has an absurdly high weight compared to others, check for target leakage. That feature is likely a proxy for the label.

3. Exploratory Data Analysis (EDA)

Visualize correlations between features and the target. Data points that correlate 100% with the target are suspicious. Leakage in data manifests as a perfect correlation.

Data leakage requires auditing the data sources and understanding the timeline of data generation.

Comprehensive Data Leakage Prevention Best Practices

To prevent data leakage, organizations must implement a robust data leakage prevention policy integrated into their MLOps lifecycle.

1. Robust Data Splitting Strategy

Always split data types before any processing. For time-series, use strictly time-based splitting to ensure no future data enters the training set. Maintain a „Holdout Set” that is never touched until the final evaluation.

2. Pipeline Isolation

Construct machine learning algorithms within pipelines (e.g., Scikit-Learn Pipelines) that ensure data preprocessing steps (scaling, imputing, encoding) are fit only on the training data and transform on the test data. This architecture mechanically prevents information leakage.

3. Feature Auditing and Selection

Scrutinize every column during feature engineering. Ask: „Would I have access to this data in real-world scenarios at the precise moment of prediction?” If the answer is no, remove it immediately to prevent leakage.

4. Remove Identifiers and PII

To protect data privacy, strip out personally identifiable information and personal data before training. Use data encryption or tokenization for data in transit and at rest.

5. Monitor External Data Sources

Be wary of external data sources. Merging external datasets without careful timestamp alignment is one of the common causes of data leakage. Ensure external data was available at the time of the prediction event.

Data Loss Prevention (DLP) and Governance Tools

Data leakage prevention (DLP) tools are essential infrastructure for the modern enterprise. Data loss prevention software can monitor data access, detect anomalies, and prevent unauthorized data handling.

Data governance ensures that sensitive information is categorized and tagged correctly. Data governance policies should dictate who can use data, how data is used, and where sensitive data is exposed.

Effective data leakage prevention tools scan for social security numbers, credit card numbers, or API keys in code repositories (like GitHub) and datasets, ensuring data security standards are met before modeling begins. This helps prevent information leakage at the source.

The Impact of Data Leakage on AI Systems

The impact of data leakage is devastating and multidimensional.

- Deployment Failure: The model fails spectacularly in production because it relied on leak data or artifacts that simply aren’t available in real-time streams.

- Financial Loss: Incorrect predictions in high-frequency trading or automated lending due to target leakage can cost millions.

- Reputational Damage: A security risk materialized through a privacy data leak destroys customer trust and invites regulatory fines.

Data leakage requires immediate remediation. Preventing data contamination is infinitely cheaper than fixing a broken system post-deployment.

Real-World Data Leakage Examples

Understanding data leakage examples helps in identifying risks. In a Kaggle competition for predicting prostate cancer, many participants experienced leakage because the dataset included ID numbers that were sequentially assigned based on the severity of the cancer. The machine learning model learned that higher IDs meant higher severity, which was target leakage. In finance, data leakage also occurs when macroeconomic indicators that are released monthly are used to predict daily stock movements without accounting for the release lag.

Conclusion: Securing the Future of AI with Synthetic Data

Data leakage is the silent killer of AI integrity. Whether it is target leakage destroying model utility or a privacy leak exposing sensitive information, the consequences are severe. Data leakage increases the risk of project failure.

Data leakage prevention best practices involve a combination of rigorous data handling, strict data splitting, and increasingly, the use of synthetic data to minimize risk. By ensuring that training data is clean, isolated, and representative, data scientists can build models that truly generalize to unseen data.

Detect and prevent data leakage before it becomes a data breach. In the era of AI, data security and model integrity are one and the same.

Ready to secure your AI pipeline? Learn how Northhaven Analytics uses synthetic data to eliminate data leakage risks entirely.

👉 Explore Our Security Solutions