By Northhaven Analytics Engineering Team

Introduction: Why “Good Enough” Is Dangerous

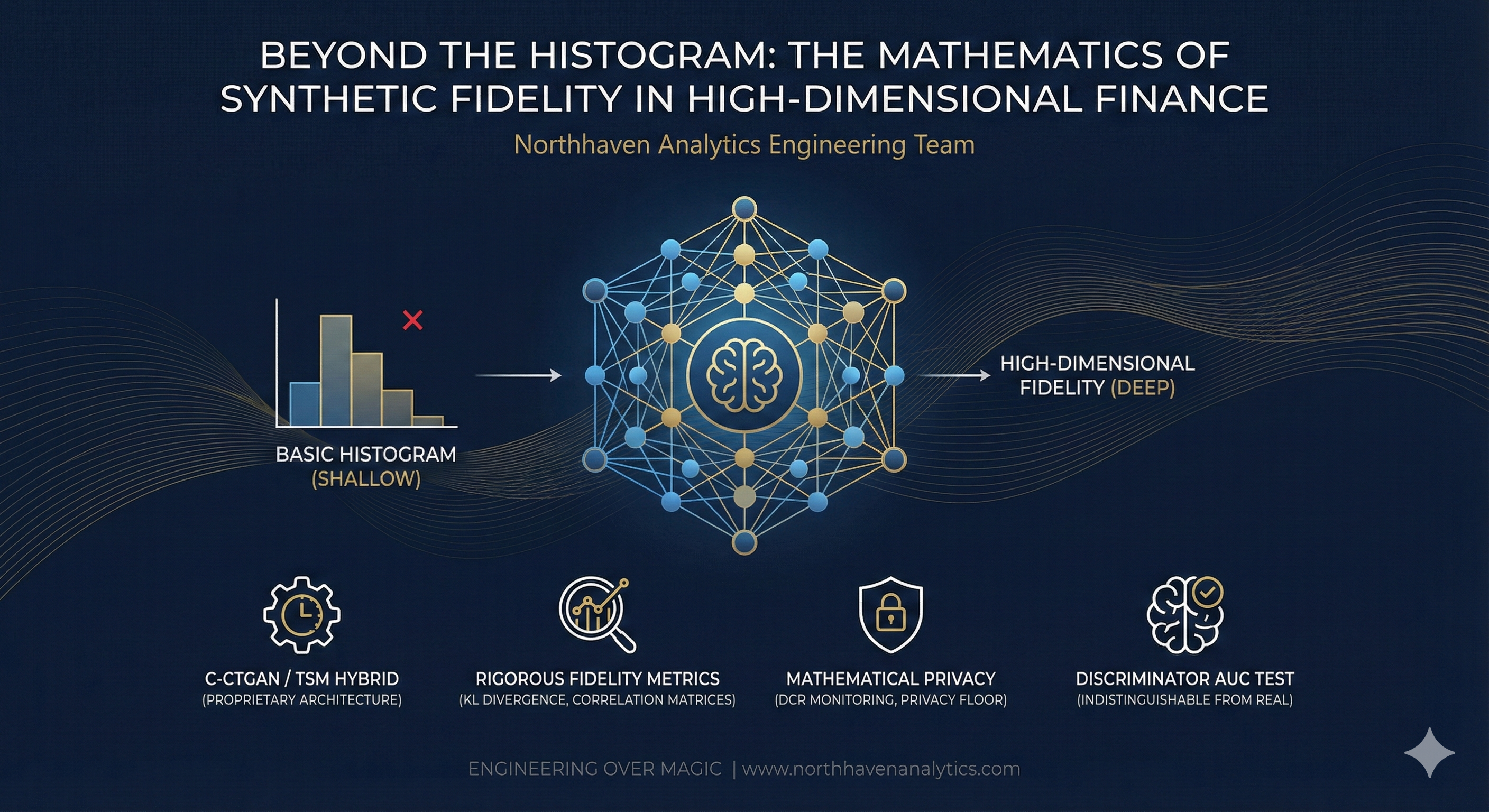

In the world of synthetic data, there is a dangerous misconception: if the histograms match, the data is good. Matching histograms is necessary but not sufficient, and treating it as proof of quality can lead to catastrophic model failure.

A simple random number generator can match a marginal distribution. If you need 10,000 ages with a mean of 35 and a standard deviation of 10, a basic script can do that in milliseconds. But financial data is not a collection of independent columns. It is a dense, entangled web of dependencies. Income drives credit limits. Credit limits constrain utilization. Utilization history predicts default probability.
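
To make the point concrete, here is a minimal Python/NumPy sketch. The income and credit-limit figures are illustrative assumptions, not Northhaven data. Shuffling each column independently preserves every marginal histogram exactly while destroying the relationship between the columns:

```python
import numpy as np

rng = np.random.default_rng(0)
n = 10_000

# Toy "real" portfolio (illustrative numbers): credit limit tracks income.
income = rng.normal(60_000, 15_000, n)
credit_limit = 0.25 * income + rng.normal(0, 2_000, n)
real = np.column_stack([income, credit_limit])

# Naive synthesis: permute each column on its own. The values, and therefore
# every histogram, are unchanged; only the pairing between columns is lost.
naive = np.column_stack([rng.permutation(real[:, j]) for j in range(real.shape[1])])

def income_limit_corr(data):
    """Pearson correlation between column 0 (income) and column 1 (limit)."""
    return np.corrcoef(data[:, 0], data[:, 1])[0, 1]

same_marginals = all(
    np.array_equal(np.sort(real[:, j]), np.sort(naive[:, j])) for j in range(2)
)
print("identical marginals:", same_marginals)  # True
print(f"real correlation:  {income_limit_corr(real):.2f}")
print(f"naive correlation: {income_limit_corr(naive):.2f}")
```

With these settings the signal has standard deviation 0.25 × 15,000 = 3,750 against noise of 2,000, so the real correlation should come out near 3,750 / √(3,750² + 2,000²) = 3,750 / 4,250 ≈ 0.88, while the shuffled copy lands near zero despite passing every histogram check.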

If your synthetic data engine captures the columns but breaks the correlations, you haven’t created a “Digital Twin.” You’ve created digital noise. At Northhaven Analytics, we don’t settle for “good enough.” We engineer for High-Dimensional Fidelity. This technical deep dive explores the architecture required to preserve the mathematical soul of financial portfolios.

The Engineering Challenge: The “Curse of Dimensionality”

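Financial datasets are notoriously high-dimensional. A typical credit risk table might have 50 features (columns) and a temporal depth of 60 months; if every feature is tracked monthly, that is 3,000 interdependent values per customer. The joint probability distribution of such a dataset is incredibly complex. Traditional synthesis methods such as Gaussian copulas often fail here because they describe all dependence between columns with a single correlation matrix: they can keep monotonic, pairwise relationships but miss non-monotonic effects, interactions, and tail dependence. They collapse under the weight of non-linear dependencies.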
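
A minimal Gaussian copula sketch shows where that limitation comes from. This is a textbook formulation written for illustration (empirical marginals, normal scores computed from ranks), not any particular library’s implementation, and the helper names are hypothetical. Because the entire dependence model is one correlation matrix, a strong but U-shaped relationship such as y ≈ x² is invisible to it:

```python
import numpy as np
from statistics import NormalDist

_std_normal = NormalDist()                      # standard normal N(0, 1)
normal_ppf = np.vectorize(_std_normal.inv_cdf)  # Phi^{-1}, elementwise
normal_cdf = np.vectorize(_std_normal.cdf)      # Phi, elementwise

def fit_sample_gaussian_copula(data, n_samples, rng):
    """Fit a Gaussian copula with empirical marginals and draw new rows."""
    n, d = data.shape
    # 1. Ranks -> pseudo-uniforms in (0, 1) -> normal scores.
    ranks = data.argsort(axis=0).argsort(axis=0) + 1
    z = normal_ppf(ranks / (n + 1))
    # 2. The whole dependence model is this single d x d correlation matrix.
    corr = np.corrcoef(z, rowvar=False)
    # 3. Sample correlated normals, map to uniforms, then invert each
    #    column's empirical distribution.
    z_new = rng.multivariate_normal(np.zeros(d), corr, size=n_samples)
    u_new = normal_cdf(z_new)
    return np.column_stack(
        [np.quantile(data[:, j], u_new[:, j]) for j in range(d)]
    )

def sq_link(data):
    """Correlation of x**2 with y: near 1 if the U-shaped link survives."""
    return np.corrcoef(data[:, 0] ** 2, data[:, 1])[0, 1]

rng = np.random.default_rng(1)
x = rng.normal(size=2_000)
y = x**2 + rng.normal(scale=0.1, size=2_000)  # strong, but non-monotonic
real = np.column_stack([x, y])
synth = fit_sample_gaussian_copula(real, 5_000, rng)

print(f"x^2-y link, real:      {sq_link(real):.2f}")
print(f"x^2-y link, synthetic: {sq_link(synth):.2f}")
```

The real data shows a near-perfect link between x² and y, while the copula’s samples show almost none: since x is symmetric, the rank correlation between x and y is close to zero, so the fitted correlation matrix treats the two columns as independent.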

Our Solution: The C-CTGAN / TSM Hybrid

We rejected off-the-shelf synthesizers in favor of a proprietary hybrid architecture: Conditional CTGAN (C-CTGAN) + Temporal Sequence Modeling (TSM).

1. The Generator (G) and the Mode Collapse Problem

Standard GANs suffer from “mode collapse”: the generator finds a small set of realistic outputs and keeps repeating them because they fool the discriminator. In finance, this is fatal. It means losing the “tail risk” customers, the rare defaults that matter most.

We engineered our Generator with PacGAN-style packing: the discriminator judges several samples at once, concatenated into a single input (a pack of all-real or all-synthetic rows), so it can detect a lack of diversity that no single sample reveals. This pushes the Generator to cover the full manifold of the real data, including its sparse tails, not just the easy center.
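
Packing itself is just a reshape. The sketch below (Python/NumPy; `pack` and `within_pack_spread` are hypothetical helper names) shows how `pac` samples become one discriminator input, and why that exposes collapse: a pack drawn from a collapsed generator has almost no spread across its members. In a real GAN the discriminator learns such signals itself; the hand-computed spread here only illustrates what packing makes visible.

```python
import numpy as np

def pack(batch, pac):
    """Concatenate `pac` consecutive samples into one discriminator input.

    batch: (batch_size, n_features), with batch_size divisible by pac.
    returns: (batch_size // pac, pac * n_features)
    """
    batch_size, n_features = batch.shape
    if batch_size % pac != 0:
        raise ValueError("batch_size must be a multiple of pac")
    return batch.reshape(batch_size // pac, pac * n_features)

def within_pack_spread(packed, pac):
    """Mean per-feature standard deviation across the members of each pack."""
    samples = packed.reshape(packed.shape[0], pac, -1)  # undo the concatenation
    return float(samples.std(axis=1).mean())

rng = np.random.default_rng(0)
pac = 10
diverse = rng.normal(size=(500, 4))                     # healthy generator output
collapsed = np.tile(rng.normal(size=(1, 4)), (500, 1))  # one output, repeated...
collapsed += rng.normal(scale=0.01, size=(500, 4))      # ...with tiny jitter

print(pack(diverse, pac).shape)  # (50, 40)
print(f"diverse spread:   {within_pack_spread(pack(diverse, pac), pac):.3f}")
print(f"collapsed spread: {within_pack_spread(pack(collapsed, pac), pac):.3f}")  # near 0
```

Each collapsed sample, viewed alone, looks like a perfectly plausible row; only the packed view reveals that the generator keeps producing the same one.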

2. Temporal Convolutions for Time-Series

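Static tabular data is comparatively easy. Time-series data is hard. A customer’s bank balance is not an independent random draw each month; it is a sequence with autocorrelation. We use Temporal Convolutional Networks (TCNs) within the generator architecture. A TCN stacks causal convolutions, so each output depends only on the current and earlier time steps, and dilates them layer by layer, so the receptive field grows exponentially with depth. Unlike standard RNNs (Recurrent Neural Networks), TCNs process all time steps in parallel and can capture long-range dependencies (e.g., annual seasonality in spending) without passing information step by step through a recurrent state. This helps ensure that a synthetic customer’s financial trajectory follows a logical, causal path over 60 months.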
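
The two properties that matter here, causality and an exponentially growing receptive field, can be checked in a few lines. The sketch below is a bare NumPy illustration under simplifying assumptions: a single shared two-tap kernel, `tanh` between layers, and no residual connections or multiple channels (real TCN blocks typically have both, with learned weights per layer). The helper names are hypothetical.

```python
import numpy as np

def causal_dilated_conv1d(x, weights, dilation):
    """y[t] = sum_i weights[i] * x[t - i*dilation], treating x[<0] as 0.

    Only the current and earlier steps contribute, so the layer is causal.
    """
    pad = (len(weights) - 1) * dilation
    x_padded = np.concatenate([np.zeros(pad), x])  # left padding only
    y = np.zeros(len(x))
    for i, w in enumerate(weights):
        start = pad - i * dilation                 # look i*dilation steps back
        y += w * x_padded[start:start + len(x)]
    return y

def receptive_field(kernel_size, dilations):
    """Number of input steps visible to one output of the stacked layers."""
    return 1 + sum((kernel_size - 1) * d for d in dilations)

def toy_tcn(x, weights, dilations):
    # Hypothetical stack: one shared kernel, tanh between layers, no residuals.
    for d in dilations:
        x = np.tanh(causal_dilated_conv1d(x, weights, d))
    return x

dilations = [1, 2, 4, 8, 16, 32]
weights = np.array([0.6, 0.4])
print(receptive_field(2, dilations))  # 64

rng = np.random.default_rng(0)
balance = rng.normal(size=60)                  # 60 months of a standardized balance
out = toy_tcn(balance, weights, dilations)

# Causality check: a shock in month 40 cannot change outputs for months 0-39.
shocked = balance.copy()
shocked[40] += 5.0
out_shocked = toy_tcn(shocked, weights, dilations)
print(np.allclose(out[:40], out_shocked[:40]))  # True
```

With kernel size 2 and dilations doubling from 1 to 32, the receptive field is 1 + (1 + 2 + 4 + 8 + 16 + 32) = 64 steps, so six layers are enough for the final month to see the full 60-month history, including a 12-month seasonal lag.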

Quantifying Reality: Our Fidelity Metrics

We do not ask you to “trust us.” We quantify fidelity with rigorous statistical tests. Every Northhaven artifact passes this gauntlet before deployment.

1. Kullback-Leibler (KL) Divergence

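For every single feature, we measure how far the synthetic (Q) distribution diverges from the real (P) one. KL divergence is not a true distance: it is asymmetric, and it becomes infinite wherever Q assigns zero probability to a value that P can take.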

$$D_{\mathrm{KL}}(P \,\|\, Q) = \sum_{x} P(x) \log \frac{P(x)}{Q(x)}$$

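A low KL divergence shows that each synthetic feature follows the real marginal distribution. On its own, it does not show that we learned the underlying probability function rather than memorizing the data: an exact copy of the real data scores a perfect zero. That is why KL divergence is paired with the dependency and privacy tests that follow.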
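
In practice each feature has to be discretized before the sum can be computed, and empty bins have to be handled. A minimal per-feature sketch for numeric columns, assuming shared histogram bins and a small smoothing constant `eps` (both are illustrative choices, and `feature_kl` is a hypothetical name):

```python
import numpy as np

def feature_kl(real_col, synth_col, bins=20, eps=1e-10):
    """Discrete KL(P || Q) for one numeric feature.

    P = real histogram, Q = synthetic histogram, on shared bin edges.
    `eps` smooths empty bins: without it, any bin where Q is 0 but P is
    not would make the divergence infinite.
    """
    edges = np.histogram_bin_edges(np.concatenate([real_col, synth_col]), bins=bins)
    p = np.histogram(real_col, bins=edges)[0] / len(real_col) + eps
    q = np.histogram(synth_col, bins=edges)[0] / len(synth_col) + eps
    p, q = p / p.sum(), q / q.sum()  # renormalize after smoothing
    return float(np.sum(p * np.log(p / q)))

rng = np.random.default_rng(0)
real = rng.normal(35, 10, 10_000)      # e.g. ages: mean 35, std 10
good = rng.normal(35, 10, 10_000)      # same distribution, new draws
shifted = rng.normal(45, 10, 10_000)   # mean off by 10 years

print(f"good:    {feature_kl(real, good):.4f}")
print(f"shifted: {feature_kl(real, shifted):.4f}")
print(f"copy:    {feature_kl(real, real):.4f}")  # 0.0000
```

For reference, the exact KL divergence between two normals with equal variance is (μ₁ − μ₂)² / (2σ²) = 10² / (2 · 10²) = 0.5, so the `shifted` estimate should land near that value. The `copy` line illustrates why KL divergence cannot serve as a memorization check: a verbatim copy earns the best possible score.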

2. Pairwise Correlation Matrices

We compute the Pearson (linear) and Spearman (rank-based, monotonic) correlation matrices for both the real and synthetic datasets, then take the L2 (Frobenius) norm of the difference between them. This single number tells us whether the pairwise “web of dependencies” remains intact: if income and debt-to-income ratio are negatively correlated in reality, they must be negatively correlated in the synthesis. Because it only sees pairs of columns, it is complemented by the classifier test below.
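
A NumPy sketch of this check, under two stated assumptions: “L2 norm” is read as the entrywise (Frobenius) norm of the difference matrix, and Spearman correlation is computed as Pearson correlation on column ranks (exact when there are no ties). Note that `np.linalg.norm(M, ord=2)` on a matrix returns the spectral norm instead, which is why `ord="fro"` is spelled out. The income and debt-to-income data are hypothetical toy values.

```python
import numpy as np

def pearson_matrix(data):
    return np.corrcoef(data, rowvar=False)

def spearman_matrix(data):
    # Spearman = Pearson on per-column ranks (assumes no tied values).
    ranks = data.argsort(axis=0).argsort(axis=0)
    return np.corrcoef(ranks, rowvar=False)

def correlation_gap(real, synth, corr_fn):
    """Frobenius norm of the difference between two correlation matrices."""
    return float(np.linalg.norm(corr_fn(real) - corr_fn(synth), ord="fro"))

def toy_portfolio(n, rng):
    # Hypothetical data: debt-to-income ratio falls as (log) income rises.
    log_income = rng.normal(11, 0.4, n)
    dti = 0.5 - 0.1 * (log_income - 11) + rng.normal(0, 0.03, n)
    return np.column_stack([np.exp(log_income), dti])

rng = np.random.default_rng(0)
real = toy_portfolio(5_000, rng)
faithful = toy_portfolio(5_000, rng)
broken = np.column_stack([rng.permutation(real[:, 0]), rng.permutation(real[:, 1])])

for name, fn in [("pearson", pearson_matrix), ("spearman", spearman_matrix)]:
    print(name,
          f"faithful gap: {correlation_gap(real, faithful, fn):.3f}",
          f"broken gap: {correlation_gap(real, broken, fn):.3f}")
```

The `broken` dataset has exactly the real marginals, yet its gap is large because the strong negative income/DTI correlation appears twice in the symmetric matrix and is lost in both places.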

3. The “Discriminator AUC” Test

The ultimate test. We train a fresh XGBoost classifier to distinguish your real data from our synthetic data and score it on records it did not see during training. If its ROC-AUC is close to 0.5 (random guessing), even a flexible non-linear model cannot tell the two apart: the synthetic data is statistically indistinguishable from the real thing, at least to that classifier.
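
A minimal sketch of the test’s logic in NumPy. To keep it dependency-free, it swaps XGBoost for a hand-rolled logistic regression on the features plus their squares and pairwise products (so a linear model can still see correlation structure). That is a weaker detector than gradient-boosted trees, so a near-0.5 AUC here is a necessary rather than sufficient signal. ROC-AUC is computed with the Mann-Whitney rank formula on a held-out split; all helper names are hypothetical.

```python
import numpy as np

def auc_score(labels, scores):
    """ROC-AUC via the Mann-Whitney rank statistic (assumes no tied scores)."""
    ranks = scores.argsort().argsort() + 1
    n_pos = labels.sum()
    n_neg = len(labels) - n_pos
    return (ranks[labels == 1].sum() - n_pos * (n_pos + 1) / 2) / (n_pos * n_neg)

def with_interactions(x):
    # Append squares and pairwise products so a linear model can see correlations.
    i, j = np.triu_indices(x.shape[1])
    return np.hstack([x, x[:, i] * x[:, j]])

def fit_logistic(x, y, lr=0.1, steps=500):
    """Batch gradient descent on the logistic loss (stand-in for XGBoost)."""
    w, b = np.zeros(x.shape[1]), 0.0
    for _ in range(steps):
        p = 1.0 / (1.0 + np.exp(-(x @ w + b)))
        w -= lr * x.T @ (p - y) / len(y)
        b -= lr * np.mean(p - y)
    return w, b

def discriminator_auc(real, synth, rng, test_frac=0.3):
    x = np.vstack([real, synth])
    y = np.concatenate([np.ones(len(real)), np.zeros(len(synth))])  # 1 = real
    x = with_interactions((x - x.mean(axis=0)) / x.std(axis=0))
    idx = rng.permutation(len(y))
    n_test = int(len(y) * test_frac)
    test, train = idx[:n_test], idx[n_test:]
    w, b = fit_logistic(x[train], y[train])
    return auc_score(y[test], x[test] @ w + b)  # scored on unseen rows only

rng = np.random.default_rng(0)
cov = [[1.0, 0.8], [0.8, 1.0]]
real = rng.multivariate_normal([0, 0], cov, size=2_000)
faithful = rng.multivariate_normal([0, 0], cov, size=2_000)
broken = np.column_stack([rng.permutation(real[:, 0]), rng.permutation(real[:, 1])])

auc_faithful = discriminator_auc(real, faithful, rng)
auc_broken = discriminator_auc(real, broken, rng)
print(f"faithful AUC: {auc_faithful:.2f}")  # near 0.5
print(f"broken AUC:   {auc_broken:.2f}")    # clearly above 0.5
```

The `broken` data has exactly the real marginals, so any per-column test passes; only the learned interaction terms let the classifier separate it from the real rows.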

The Compliance Angle: Mathematical Privacy

High fidelity must not come at the cost of privacy. This is where the Distance to Closest Record (DCR) metric comes in. During training, we monitor the distance between each generated synthetic vector and its nearest real data vector. We enforce a Privacy Floor: no synthetic record is allowed to be “too close” to a real record in high-dimensional space. This guards against memorization (overfitting to individual training rows) and ensures that the model releases new individuals drawn from the learned distribution, rather than near-copies of real ones.
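
A brute-force sketch of DCR and a floor filter in NumPy. It assumes the features are already scaled to comparable units (otherwise Euclidean distance is dominated by the largest-valued column), applies the floor as a post-hoc filter rather than inside training, and uses a hypothetical threshold; the helper names are illustrative.

```python
import numpy as np

def distance_to_closest_record(synth, real):
    """Euclidean distance from each synthetic row to its nearest real row."""
    # Brute force via broadcasting: O(n_synth * n_real) memory, fine for a demo;
    # large tables would need a nearest-neighbour index instead.
    diffs = synth[:, None, :] - real[None, :, :]
    return np.sqrt((diffs ** 2).sum(axis=2)).min(axis=1)

def apply_privacy_floor(synth, real, floor):
    """Keep only synthetic rows whose DCR is at least `floor`."""
    dcr = distance_to_closest_record(synth, real)
    return synth[dcr >= floor], dcr

rng = np.random.default_rng(0)
real = rng.normal(size=(500, 3))                 # assume standardized features
fresh = rng.normal(size=(300, 3))                # new draws from the same distribution
leaked = real[:20] + rng.normal(scale=1e-3, size=(20, 3))  # near-copies of real rows
synth = np.vstack([fresh, leaked])

floor = 0.02  # hypothetical Privacy Floor; a real threshold needs per-dataset tuning
kept, dcr = apply_privacy_floor(synth, real, floor)
print(f"kept {len(kept)} of {len(synth)} rows")
print("all near-copies rejected:", bool((dcr[300:] < floor).all()))  # True
```

Choosing the floor is the hard part: set it too low and near-copies slip through; set it too high and legitimate records in dense regions of the distribution are discarded, which erodes fidelity.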

Conclusion: Engineering Over Magic

There is no magic in synthetic data. There is only rigorous probability theory, advanced deep learning architecture, and relentless validation. Financial institutions cannot build billion-dollar risk models on “mock data.” They need mathematically rigorous synthetic artifacts. That is what Northhaven builds. We don’t just mimic the data; we master the distribution.

Ready to stop approximating and start engineering? Dive deeper into our technical documentation at www.northhavenanalytics.com.