By Northhaven Analytics Data Science Team

Introduction: The Imbalanced Data Problem in Financial Machine Learning and Fraud Detection

In the complex domain of machine learning and data science, few challenges are as pervasive, persistent, and costly as the imbalanced data problem. This issue is particularly acute in the financial sector, where risk is hidden in the tails of the distribution. When building predictive models for critical tasks like fraud detection or credit risk scoring, data scientists inevitably encounter a skewed class distribution: millions of legitimate transactions (the majority class) versus a handful of fraudulent ones (the minority class).

An imbalanced dataset is not merely a statistical nuisance; it is a structural barrier to effective AI deployment. A model trained on imbalanced data often becomes heavily biased toward the majority class. In the extreme case, it predicts the majority class for every instance, maximizing overall accuracy while failing to detect the minority-class cases that matter. In the high-stakes world of finance, missing a fraudulent transaction (a false negative) is typically far more costly than flagging a legitimate one (a false positive).

This comprehensive guide explores how to deal with imbalanced data, analyzes the specific challenges of imbalanced distributions in banking, and details the advanced machine learning techniques used to handle imbalanced datasets. We will delve into resampling techniques such as oversampling and undersampling, explore cost-sensitive learning, and discuss how the Synthetic Minority Over-sampling Technique (SMOTE) and modern Generative AI are redefining how we balance datasets for optimal performance.

Understanding Class Imbalance: The Dynamics of Majority Class vs. Minority Class

Class imbalance refers to a scenario where the number of instances of one class far exceeds that of the other. In a typical binary classification problem in finance, we deal with:

- The Majority Class (Negative Class): The dominant class. In banking, these are normal, non-fraudulent transactions which constitute the bulk of the data.

- The Minority Class (Positive Class): The target of interest. These are fraudulent transactions, loan defaults, or rare-disease claims in insurance. This class is severely underrepresented in the dataset.

The Scale of the Problem: Dealing with Highly Imbalanced Datasets in Finance

In financial fraud, we often deal with highly imbalanced datasets where the ratio can be as extreme as 1:10,000. Standard learning algorithms struggle here because they generally treat all misclassification errors equally. However, the cost of misclassifications in finance is asymmetric. Failing to detect financial fraud creates direct monetary loss and reputational damage.

Imbalance can produce models that underfit the minority class while overfitting to the majority class. To build robust machine learning models, we must move beyond standard accuracy and address class imbalance at both the data level and the algorithmic level.

Why Standard Machine Learning Models Fail on Imbalanced Data

When a standard classifier (like Logistic Regression or a basic Decision Tree) is fed an imbalanced dataset, the learning algorithm aims to minimize global error.

- The Accuracy Trap: If 99.9% of transactions are legitimate, a model that predicts "legitimate" 100% of the time achieves 99.9% accuracy but 0% recall. It fails to detect a single fraud.

- Bias: The decision boundary becomes biased toward the majority class, pushing the minority class samples into the noise or treating them as outliers.
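
To make the accuracy trap concrete, here is a minimal NumPy sketch on hypothetical labels with a 0.1% fraud rate. The "model" is simply a rule that always predicts the majority class; the dataset size and random seed are illustrative assumptions, not real transaction data.

```python
import numpy as np

# Hypothetical toy labels: 100 frauds among 100,000 transactions (0.1%).
rng = np.random.default_rng(0)
y_true = np.zeros(100_000, dtype=int)
y_true[rng.choice(y_true.size, size=100, replace=False)] = 1

# A "model" that always predicts the majority class (legitimate = 0).
y_pred = np.zeros_like(y_true)

accuracy = np.mean(y_pred == y_true)
# Recall on the minority class: detected frauds / actual frauds.
recall = np.sum((y_pred == 1) & (y_true == 1)) / np.sum(y_true == 1)

print(f"accuracy = {accuracy:.3f}")  # accuracy = 0.999
print(f"recall   = {recall:.3f}")    # recall   = 0.000
```

The accuracy looks excellent, yet not a single fraud is caught.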

To improve model performance, we must stop relying on accuracy and start using metrics that focus on minority-class performance, such as precision, recall, F1-score, and AUC-PR.

Strategic Approaches to Handle Imbalanced Datasets: Resampling and Algorithms

There is no single way to handle imbalance. Data scientists must employ a combination of data balancing strategies, algorithmic adjustments, and hybrid approaches.

1. Resampling Techniques: Undersampling the Majority and Oversampling the Minority

Resampling involves modifying the training data to artificially balance the dataset. This is often the first step in dealing with imbalanced datasets.

Random Undersampling: Reducing the Dominant Class

This involves randomly removing instances from the majority class until the dataset is balanced.

- Pros: Reduces computational load and training time.

- Cons: Risks discarding valuable information. In highly imbalanced scenarios, you might throw away 99% of your data, losing critical variance.
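
A minimal NumPy sketch of random undersampling, assuming a binary problem where 0 is the majority (legitimate) label. The function name random_undersample and the toy data are hypothetical; in practice, the imbalanced-learn library offers the same behavior through its RandomUnderSampler class.

```python
import numpy as np

def random_undersample(X, y, majority_label=0, rng=None):
    """Randomly drop majority-class rows until both classes have equal counts.

    Assumes a binary problem where the majority class is `majority_label`.
    """
    rng = np.random.default_rng(rng)
    maj_idx = np.flatnonzero(y == majority_label)
    min_idx = np.flatnonzero(y != majority_label)
    # Keep a random subset of majority rows, the same size as the minority class.
    kept_maj = rng.choice(maj_idx, size=min_idx.size, replace=False)
    keep = np.concatenate([kept_maj, min_idx])
    rng.shuffle(keep)
    return X[keep], y[keep]

# Toy data: 990 legitimate rows, 10 fraudulent rows.
X = np.arange(1000, dtype=float).reshape(-1, 1)
y = np.array([0] * 990 + [1] * 10)
X_bal, y_bal = random_undersample(X, y, rng=42)
print(np.bincount(y_bal))  # [10 10]
```

Note that 980 of the 990 legitimate rows are discarded here, which is exactly the information loss described above.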

Random Oversampling: Duplicating the Positive Class

This involves randomly duplicating existing minority class samples to match the size of the majority class.

- Pros: No information loss; keeps all data.

- Cons: High risk of overfitting. The model can memorize the duplicated data points rather than learning a general decision boundary.
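
The corresponding sketch for random oversampling draws minority rows with replacement until the classes match. As before, the function name and toy data are hypothetical; imbalanced-learn provides this as RandomOverSampler.

```python
import numpy as np

def random_oversample(X, y, minority_label=1, rng=None):
    """Duplicate randomly chosen minority rows (with replacement) until classes are equal."""
    rng = np.random.default_rng(rng)
    min_idx = np.flatnonzero(y == minority_label)
    maj_idx = np.flatnonzero(y != minority_label)
    # Draw extra minority rows with replacement to close the gap.
    extra = rng.choice(min_idx, size=maj_idx.size - min_idx.size, replace=True)
    keep = np.concatenate([maj_idx, min_idx, extra])
    rng.shuffle(keep)
    return X[keep], y[keep]

X = np.arange(1000, dtype=float).reshape(-1, 1)
y = np.array([0] * 990 + [1] * 10)
X_bal, y_bal = random_oversample(X, y, rng=42)
print(np.bincount(y_bal))  # [990 990]
# Every minority row is an exact copy of one of the 10 originals,
# which is why the model can end up memorizing them.
print(np.unique(X_bal[y_bal == 1]).size)  # 10
```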

2. Synthetic Minority Over-sampling Technique (SMOTE) and Advanced Variations

The Synthetic Minority Over-sampling Technique (SMOTE) is a more sophisticated form of oversampling. Instead of simply duplicating records, it creates synthetic minority samples by interpolating between existing positive-class instances in the feature space.

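- How it works: It selects a minority class instance, finds its k nearest neighbors within the minority class, picks one of those neighbors at random, and generates a new point at a random position along the line segment joining the two.

- Advanced Variants (Borderline-SMOTE, ADASYN): These variants concentrate synthetic data where classification is hardest. Borderline-SMOTE generates samples only from minority instances that lie near the decision boundary, while ADASYN (Adaptive Synthetic Sampling) generates more samples around minority instances that have many majority-class neighbors. This helps the classifier define the border between fraud and non-fraud more clearly.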
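
The interpolation step can be sketched in a few lines of NumPy. This is a minimal illustration of basic SMOTE only, not of Borderline-SMOTE or ADASYN; the function name smote is hypothetical, and the brute-force distance matrix is an assumption that only suits small minority sets. For production use, imbalanced-learn implements SMOTE, BorderlineSMOTE, and ADASYN.

```python
import numpy as np

def smote(X_min, n_new, k=5, rng=None):
    """Minimal SMOTE sketch: create `n_new` synthetic rows from minority rows `X_min`.

    For each synthetic row: pick a random minority sample, pick one of its
    k nearest minority neighbours, and interpolate at a random point between them.
    """
    rng = np.random.default_rng(rng)
    n = X_min.shape[0]
    k = min(k, n - 1)
    # Pairwise Euclidean distances between minority samples (fine for small n).
    d = np.linalg.norm(X_min[:, None, :] - X_min[None, :, :], axis=-1)
    np.fill_diagonal(d, np.inf)  # a point is not its own neighbour
    neighbours = np.argsort(d, axis=1)[:, :k]
    base = rng.integers(0, n, size=n_new)                      # random seed samples
    nb = neighbours[base, rng.integers(0, k, size=n_new)]      # one neighbour each
    gap = rng.random((n_new, 1))                               # factor in [0, 1)
    return X_min[base] + gap * (X_min[nb] - X_min[base])

# Four minority points at the corners of a unit square.
X_min = np.array([[0.0, 0.0], [1.0, 0.0], [0.0, 1.0], [1.0, 1.0]])
synthetic = smote(X_min, n_new=6, k=2, rng=0)
print(synthetic.shape)  # (6, 2)
```

With k=2, each corner's nearest neighbours are its two adjacent corners, so every synthetic point lands on an edge of the square rather than duplicating a corner.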

3. Cost-Sensitive Learning: Adjusting Class Weights for Imbalanced Data

Cost-sensitive learning modifies the learning algorithm itself. Instead of resampling the data, we assign a higher class weight to the minority class.

- Weights Inversely Proportional: We set weights inversely proportional to class frequencies. If fraud is 1% of the data, a misclassified fraud case is penalized roughly 99 times more heavily than a misclassified legitimate transaction in the training loss.

- Implementation in Scikit-Learn: Libraries like Scikit-learn allow you to set class_weight='balanced' in estimators such as Logistic Regression, SVM, or Random Forest. This forces the model to pay significantly more attention to the underrepresented minority class.
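
The weights behind class_weight='balanced' follow a simple formula, which scikit-learn documents as n_samples / (n_classes * np.bincount(y)). The NumPy sketch below reproduces that formula on hypothetical labels with 1% fraud; the helper name balanced_class_weights is our own, and the labels are assumed to be integers 0..C-1.

```python
import numpy as np

def balanced_class_weights(y):
    """Weights inversely proportional to class frequency.

    Mirrors the formula scikit-learn documents for class_weight='balanced':
    n_samples / (n_classes * np.bincount(y)). Assumes labels 0..C-1.
    """
    counts = np.bincount(y)
    return y.size / (counts.size * counts)

y = np.array([0] * 990 + [1] * 10)  # hypothetical labels, 1% fraud
w = balanced_class_weights(y)
print(w)            # one weight per class: small for legitimate, large for fraud
print(w[1] / w[0])  # about 99: a fraud error costs ~99x a legitimate-class error
```

Passing class_weight='balanced' to LogisticRegression, SVC, or RandomForestClassifier applies weights of this form internally, so there is usually no need to compute them by hand.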

Advanced Algorithms: Ensemble Methods and Deep Learning for Imbalance

Ensemble methods and Deep Learning architectures offer powerful ways to deal with imbalanced data beyond simple resampling.

Balanced Random Forest and Ensemble Classifiers

A Random Forest is an ensemble of decision trees. In a Balanced Random Forest, each tree is trained on a balanced bootstrap sample in which the majority class is undersampled to match the minority class. Because each tree draws a different majority subsample, the ensemble as a whole still learns from a large share of the majority class while every individual tree sees a balanced view. Averaging many trees mainly reduces variance, which offsets the instability of training each tree on a small sample.
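
The per-tree sampling idea can be sketched with NumPy alone. This is a simplified illustration under the assumption of binary labels with 1 = fraud; it is not the exact procedure of any particular library (imbalanced-learn ships a ready-made BalancedRandomForestClassifier). Each index set would be used to fit one decision tree, and the trees' predicted probabilities averaged.

```python
import numpy as np

def balanced_bootstrap_indices(y, n_trees, rng=None):
    """For each tree, draw a bootstrap of the minority class and an equal-size
    random sample of the majority class (both with replacement).

    Assumes binary labels with 1 = minority (fraud), 0 = majority.
    """
    rng = np.random.default_rng(rng)
    min_idx = np.flatnonzero(y == 1)
    maj_idx = np.flatnonzero(y == 0)
    samples = []
    for _ in range(n_trees):
        boot_min = rng.choice(min_idx, size=min_idx.size, replace=True)
        boot_maj = rng.choice(maj_idx, size=min_idx.size, replace=True)
        samples.append(np.concatenate([boot_min, boot_maj]))
    return samples

y = np.array([0] * 990 + [1] * 10)
per_tree = balanced_bootstrap_indices(y, n_trees=50, rng=0)
# Each tree sees a balanced 10 + 10 sample ...
print({int(np.bincount(y[idx])[1]) for idx in per_tree})  # {10}
# ... but across 50 trees the forest touches many distinct majority rows.
seen_majority = np.unique(np.concatenate([idx[y[idx] == 0] for idx in per_tree]))
print(seen_majority.size)
```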

Boosting Algorithms (XGBoost, LightGBM, CatBoost)

Gradient boosting algorithms are strong baselines for tabular data, and they can be tuned for imbalance. By adjusting parameters like scale_pos_weight (available in XGBoost, LightGBM, and CatBoost), we can make the boosting process cost-sensitive, increasing the weight of minority-class examples in the loss so that errors on them dominate the gradient updates. CatBoost and LightGBM also handle categorical features natively, which suits the categorical fields common in transaction data.
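
XGBoost's documentation suggests sum(negative instances) / sum(positive instances) as a typical starting value for scale_pos_weight. A short sketch of that computation on hypothetical training labels follows; the booster call is left as a comment because it requires the xgboost package, and the value should still be tuned on validation data.

```python
import numpy as np

y_train = np.array([0] * 9_990 + [1] * 10)  # hypothetical labels, roughly 1:999

# Starting value suggested by XGBoost's docs:
# sum(negative instances) / sum(positive instances).
scale_pos_weight = np.sum(y_train == 0) / np.sum(y_train == 1)
print(scale_pos_weight)  # 999.0

# It would then be passed to the booster, for example (not run here):
# model = xgboost.XGBClassifier(scale_pos_weight=scale_pos_weight)
```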

Deep Learning Approaches: Autoencoders and Focal Loss

For complex, high-dimensional data, Deep Learning offers unique solutions:

- Autoencoders for Anomaly Detection: Instead of binary classification, we train an Autoencoder only on the majority class (legitimate transactions). Because the network has learned to reconstruct only normal behavior, a fraudulent transaction tends to produce a high reconstruction error, which flags it as an anomaly.

- Focal Loss: A specialized loss function that down-weights easy, well-classified examples (in fraud data, mostly the abundant legitimate transactions) and focuses training on hard, misclassified ones, making it effective for extreme imbalance during neural network training.
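
The focal loss for binary labels is FL(p_t) = -α_t (1 - p_t)^γ log(p_t), where p_t is the probability the model assigns to the true class. Below is a NumPy sketch of the loss value only (a training framework would also need its gradient); the defaults γ=2 and α=0.25 are the commonly cited values and are an assumption, not a recommendation for fraud data.

```python
import numpy as np

def binary_focal_loss(y_true, p, gamma=2.0, alpha=0.25, eps=1e-12):
    """Mean binary focal loss: -alpha_t * (1 - p_t)**gamma * log(p_t).

    p is the predicted probability of the positive (fraud) class.
    alpha weights the positive class (1 - alpha the negative); alpha=None disables it.
    With gamma=0 and alpha=None this reduces to ordinary binary cross-entropy.
    """
    y_true = np.asarray(y_true, dtype=float)
    p = np.clip(np.asarray(p, dtype=float), eps, 1 - eps)
    p_t = np.where(y_true == 1, p, 1 - p)  # probability assigned to the true class
    alpha_t = 1.0 if alpha is None else np.where(y_true == 1, alpha, 1 - alpha)
    return np.mean(-alpha_t * (1 - p_t) ** gamma * np.log(p_t))

# An easy negative (p=0.01 for a legitimate row) versus a hard positive (p=0.10 for a fraud).
easy = binary_focal_loss([0], [0.01], alpha=None)
hard = binary_focal_loss([1], [0.10], alpha=None)
bce_easy = binary_focal_loss([0], [0.01], gamma=0.0, alpha=None)
print(easy / bce_easy)  # about 1e-4, i.e. (1 - 0.99)**2: the easy example is nearly ignored
```

The factor (1 - p_t)^γ is what shrinks the contribution of confidently correct predictions, so the many easy legitimate transactions no longer dominate the loss.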

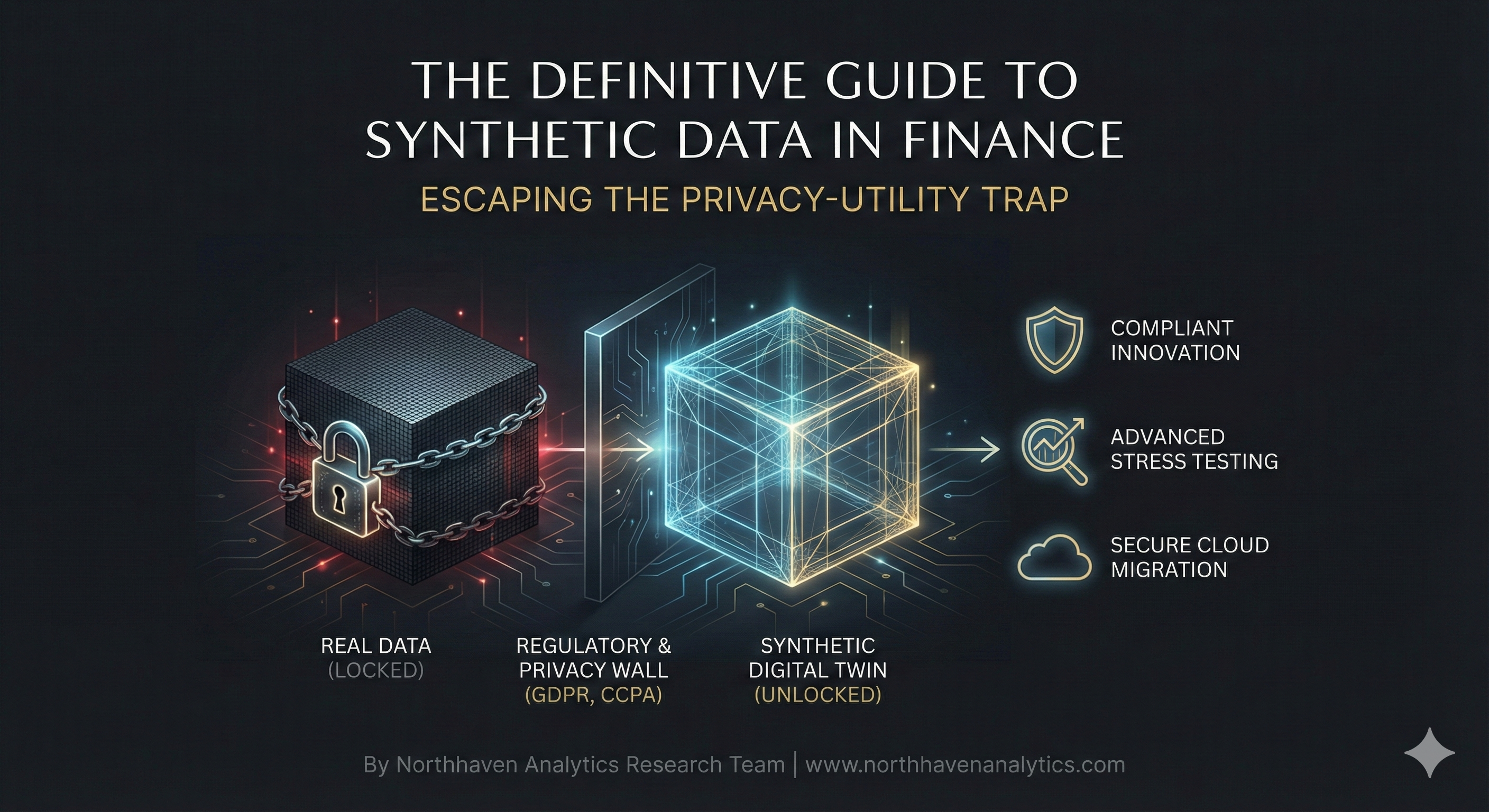

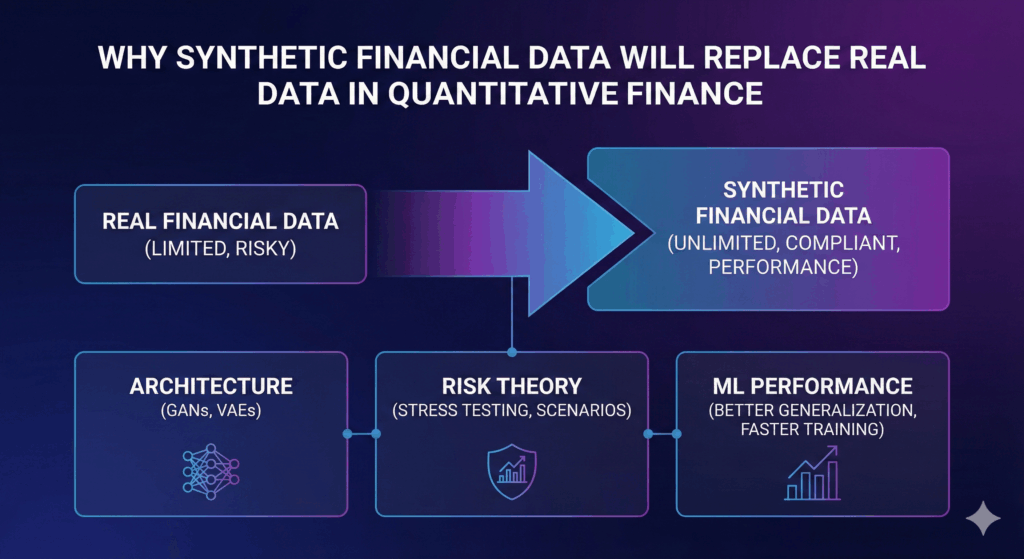

The Northhaven Approach: Generative AI for Imbalanced Data

While SMOTE and undersampling are standard, they have limitations in complex, high-dimensional financial data. Northhaven Analytics takes a different approach: Generative AI.

Instead of simple interpolation, we use conditional tabular GANs (CTGAN) to generate synthetic records specifically for the minority class.

- Learn the Distribution: Our model learns the joint probability distribution of the fraudulent events, capturing non-linear correlations between features.

- Generate New Data: We generate thousands of new fraud cases that are not copies of the original samples but remain valid within the financial logic of the data.

- Balance the Dataset: We combine these high-fidelity synthetic frauds with the real-world legitimate transactions to create a balanced training dataset.

This method preserves categorical correlations and temporal dependencies that simple resampling techniques often destroy. It allows you to balance the dataset with synthetic minority examples that look and behave like real fraud.

Evaluating Performance: Metrics That Matter for Imbalanced Data

When dealing with imbalanced datasets, standard metrics like accuracy are misleading. You must use metrics that specifically evaluate performance on the minority class.

- Confusion Matrix: A foundational tool that visualizes true positives, false positives, true negatives, and false negatives.

- Precision: Of all predicted frauds, how many were actually fraud? (Low precision = too many false alarms, irritating customers).

- Recall (Sensitivity): Of all actual frauds, how many did we detect? (Low recall = missing fraudulent transactions, losing money).

- F1-Score: The harmonic mean of precision and recall, providing a single metric for model quality on the positive class.

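- Area Under the Precision-Recall Curve (AUPRC): Often more informative than ROC-AUC for highly imbalanced datasets. The ROC curve's false-positive rate is diluted by the huge number of true negatives, whereas precision directly reflects how many flagged cases are false alarms.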

- Matthews Correlation Coefficient (MCC): A robust metric that takes into account all four quadrants of the confusion matrix, ideal for imbalanced binary classification.
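
The threshold-based metrics above can all be computed from the four confusion-matrix counts. Here is a plain-Python sketch on a hypothetical scenario: 1,000 transactions with 10 frauds, where the model flags 20 transactions and 8 of them are real fraud. The function names are our own.

```python
import math

def confusion_counts(y_true, y_pred):
    """Return (tp, fp, tn, fn) for binary labels where 1 = fraud."""
    tp = sum(1 for t, p in zip(y_true, y_pred) if t == 1 and p == 1)
    fp = sum(1 for t, p in zip(y_true, y_pred) if t == 0 and p == 1)
    tn = sum(1 for t, p in zip(y_true, y_pred) if t == 0 and p == 0)
    fn = sum(1 for t, p in zip(y_true, y_pred) if t == 1 and p == 0)
    return tp, fp, tn, fn

def imbalance_metrics(y_true, y_pred):
    tp, fp, tn, fn = confusion_counts(y_true, y_pred)
    accuracy = (tp + tn) / (tp + fp + tn + fn)
    precision = tp / (tp + fp) if tp + fp else 0.0
    recall = tp / (tp + fn) if tp + fn else 0.0
    f1 = 2 * precision * recall / (precision + recall) if precision + recall else 0.0
    # MCC uses all four quadrants of the confusion matrix.
    denom = math.sqrt((tp + fp) * (tp + fn) * (tn + fp) * (tn + fn))
    mcc = (tp * tn - fp * fn) / denom if denom else 0.0
    return {"accuracy": accuracy, "precision": precision,
            "recall": recall, "f1": f1, "mcc": mcc}

# 10 frauds (8 caught, 2 missed) followed by 990 legitimate rows (12 false alarms).
y_true = [1] * 10 + [0] * 990
y_pred = [1] * 8 + [0] * 2 + [1] * 12 + [0] * 978
print({k: round(v, 3) for k, v in imbalance_metrics(y_true, y_pred).items()})
# {'accuracy': 0.986, 'precision': 0.4, 'recall': 0.8, 'f1': 0.533, 'mcc': 0.56}
```

An accuracy of 98.6% hides the fact that 60% of the alerts are false alarms; precision and MCC make that visible.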

Challenges and Best Practices for Data Scientists

Handling imbalanced data requires a systematic workflow and adherence to best practices.

- Split First, Resample Later: Never resample (oversample/undersample) before splitting your data into train and test sets. If you do, duplicated or synthetic copies of the same records can land in both sets (data leakage), and your measured test performance will be deceptively high. The same rule applies to cross-validation: resample only inside each training fold. The test set must represent the true class distribution.

- Understand the Domain: In tasks like fraud detection, a false negative (missed fraud) is expensive, but too many false positives (blocking valid cards) destroy the customer experience. The cost matrix used for cost-sensitive learning must reflect business reality.

- Explore Hybrid Methods: Often, a combination of SMOTE (to enlarge the minority class) and a cleaning undersampler (to remove noisy, overlapping samples) works best, e.g., SMOTEENN, which pairs SMOTE with Edited Nearest Neighbours.

- Monitor Data Drift: In production, both the class distribution and the fraud patterns themselves may shift over time. Continuous monitoring is essential to detect such drift and to trigger retraining so the model keeps up with new fraud patterns.
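
The "split first, resample later" rule can be sketched with NumPy: a stratified split keeps the true class ratio in the test set, and random oversampling is then applied to the training indices only. Function names, the 20% test fraction, and the toy labels are hypothetical. When using imbalanced-learn, its Pipeline class applies samplers only during fitting, which enforces the same discipline inside cross-validation.

```python
import numpy as np

def stratified_split(y, test_frac=0.2, rng=None):
    """Split indices so train and test each keep the original class ratio."""
    rng = np.random.default_rng(rng)
    train, test = [], []
    for label in np.unique(y):
        idx = rng.permutation(np.flatnonzero(y == label))
        n_test = int(round(test_frac * idx.size))
        test.append(idx[:n_test])
        train.append(idx[n_test:])
    return np.concatenate(train), np.concatenate(test)

def oversample_indices(y, idx, rng=None):
    """Random oversampling restricted to the given (training) indices."""
    rng = np.random.default_rng(rng)
    pos, neg = idx[y[idx] == 1], idx[y[idx] == 0]
    extra = rng.choice(pos, size=neg.size - pos.size, replace=True)
    return np.concatenate([neg, pos, extra])

y = np.array([0] * 990 + [1] * 10)
train_idx, test_idx = stratified_split(y, rng=0)     # 1) split first
train_bal = oversample_indices(y, train_idx, rng=0)  # 2) resample the training part only

print(np.bincount(y[train_bal]))  # balanced training labels
print(np.bincount(y[test_idx]))   # test set keeps the true 99:1 ratio
```

Because every oversampled index comes from the training split, no test record (or copy of one) is ever seen during training.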

Conclusion: Mastering the Imbalance

The class imbalance problem is inherent to financial fraud, risk modeling, and rare-disease detection. You cannot wish it away, but you can manage it.

By leveraging sophisticated machine learning techniques like cost-sensitive learning, ensemble methods, and modern synthetic minority generation via Generative AI, data scientists can build predictive models that are both accurate and robust.

Whether you use a balanced random forest, adjust weights inversely proportional to frequency, or deploy Generative AI to balance datasets, the goal remains the same: to see the signal in the noise. Imbalanced data is not a dead end; it is an engineering challenge that Northhaven Analytics solves every day with superior synthetic data infrastructure.

Ready to fix your imbalanced datasets? Explore how our Generative AI creates perfectly balanced financial data for robust model training.